The term ‘serverless’ suggests a system with no back-end, or one that uses no servers at all. This terminology is very misleading, because a serverless architecture certainly includes a back-end. The difference is that the users or programmers developing an application no longer have to deal with the servers.

Serverless computing is a cloud computing model. Cloud computing is offered at different levels of abstraction, and the highest of these is serverless computing, also called Function as a Service (FaaS). In FaaS, everything below the business logic is taken care of by the platform: servers, network, databases, possibly the virtualization layer, the operating system, the runtime environment, data and even the application. The user only has to implement the business logic in the form of functions. Unlike with traditional systems, users do not need to care what happens at the lower OS levels or how the servers are managed or protected. Likewise, they do not have to worry about aspects such as scalability, specific hardware or the middleware services in use; the underlying platform handles all of this.

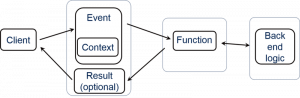

The typical structure of a serverless computing architecture is shown in the diagram below:

A function is triggered by an event, started in a specific context, and calls business logic or other back-end services before returning its result. Functions are invoked either synchronously via the classic request/response model or asynchronously via events. To avoid tight coupling between individual functions and to optimize resource usage at runtime, the asynchronous variant should be preferred.
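To make the two invocation styles concrete, here is a minimal in-process sketch in Python. It does not use any real FaaS API: `Event`, `resize_image`, `invoke_sync` and `EventBus` are hypothetical names, and the queue only imitates what a platform's event service would do. The synchronous caller waits for the result, while the asynchronous producer only publishes an event and never references the consuming function, which is the loose coupling recommended above.

```python
import queue
from dataclasses import dataclass
from typing import Any, Callable

Handler = Callable[["Event"], dict]

@dataclass
class Event:
    """Hypothetical event shape; real platforms define their own formats."""
    source: str
    payload: dict[str, Any]

def resize_image(event: Event) -> dict:
    """Stand-in for a function that contains business logic."""
    return {"status": "resized", "width": event.payload["width"] // 2}

def invoke_sync(fn: Handler, event: Event) -> dict:
    # Request/response: the caller blocks until the function returns its result.
    return fn(event)

class EventBus:
    """Asynchronous invocation: producers publish events and return immediately."""

    def __init__(self) -> None:
        self._queue: queue.Queue = queue.Queue()
        self._subscribers: dict[str, Handler] = {}
        self.results: list[dict] = []

    def subscribe(self, source: str, fn: Handler) -> None:
        self._subscribers[source] = fn

    def publish(self, event: Event) -> None:
        # The producer neither waits for nor knows which function consumes the event.
        self._queue.put(event)

    def drain(self) -> None:
        # Stands in for the platform delivering queued events to subscribed functions.
        while not self._queue.empty():
            event = self._queue.get()
            self.results.append(self._subscribers[event.source](event))

print(invoke_sync(resize_image, Event("upload", {"width": 800})))  # {'status': 'resized', 'width': 400}

bus = EventBus()
bus.subscribe("upload", resize_image)
bus.publish(Event("upload", {"width": 640}))  # returns at once; nothing has run yet
bus.drain()
print(bus.results)  # [{'status': 'resized', 'width': 320}]
```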

How does it help security?

1. Management of OS patches is not necessary

With FaaS, the underlying platform manages the servers for the user, relieving them of deployment, management and monitoring.

The FaaS platform also takes responsibility for “patching” these servers, which means updating the operating system and its dependencies to secure versions when they are affected by newly disclosed security vulnerabilities. Known vulnerabilities in unpatched servers and applications are one of the main causes of successful system compromises.

‘Serverless’ therefore shifts the risk of the unpatched server from the user to the “professionals” who operate the platform.

2. Short-lived servers are less exposed to attackers

An important constraint is that serverless functions are stateless. In a FaaS environment, the user does not know, and does not need to care, which server executes a function. The platform provisions servers and shuts them down as it sees fit.

Serverless does not give attackers the luxury of time. Because the machines are repeatedly reset, an attacker has to compromise them again and again, each time facing the risk of failure or detection. Stateless, short-lived systems, including all FaaS functions, are therefore inherently less exposed to attack.
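The effect of short-lived execution environments can be sketched with a toy model. `ToyPlatform`, its `MAX_INVOCATIONS` value and the two handlers are hypothetical; real providers decide themselves when to recycle an environment and may keep a warm one for many calls. The sketch shows that an implant survives only as long as the environment it was written to, so the attacker has to get back in once that environment is replaced.

```python
import itertools
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class ExecutionEnvironment:
    """Hypothetical disposable sandbox; `files` stands in for its local disk."""
    env_id: int
    files: dict[str, str] = field(default_factory=dict)
    invocations: int = 0

class ToyPlatform:
    """Recycles the execution environment after a fixed number of invocations.

    MAX_INVOCATIONS is an illustrative assumption: real providers choose
    environment lifetimes themselves and do not guarantee a specific value.
    """
    MAX_INVOCATIONS = 3

    def __init__(self) -> None:
        self._ids = itertools.count(1)
        self.env = ExecutionEnvironment(next(self._ids))

    def invoke(self, handler: Callable[[ExecutionEnvironment], None]) -> int:
        if self.env.invocations >= self.MAX_INVOCATIONS:
            # Throw the old environment away, together with anything written to it.
            self.env = ExecutionEnvironment(next(self._ids))
        self.env.invocations += 1
        handler(self.env)
        return self.env.env_id

def attacker_handler(env: ExecutionEnvironment) -> None:
    env.files["/tmp/backdoor"] = "implant"  # persistence attempt

def benign_handler(env: ExecutionEnvironment) -> None:
    pass

toy = ToyPlatform()
toy.invoke(attacker_handler)
toy.invoke(benign_handler)
toy.invoke(benign_handler)
print("/tmp/backdoor" in toy.env.files)  # True: the same environment is still in use
toy.invoke(benign_handler)
print("/tmp/backdoor" in toy.env.files)  # False: a fresh environment replaced it
```

Note that the implant does survive while the environment is reused; the protection comes from the environment eventually being discarded, not from each call being isolated.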

3. Denial-of-Service resistance through extreme elasticity

FaaS provisions functions immediately and seamlessly on demand. This automated provisioning leads to extreme elasticity, so no servers need to be kept running in advance.

This scalability also protects against denial-of-service attacks, in which attackers try to take systems down by submitting large numbers of compute- or memory-intensive operations that exhaust server capacity and prevent legitimate users from using the application.

More requests, whether legitimate or malicious, lead the platform to provision more servers ad hoc, which ultimately helps to prevent denial of service through server overload.
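The scaling behaviour can be estimated with Little's law: the number of concurrent executions needed is roughly the request rate multiplied by the average execution time. The sketch below assumes a 200 ms average duration and a hypothetical cap of 500 concurrent executions. Many providers impose such a cap, but the actual values depend on the platform and account, and the sketch simply assumes that requests beyond the cap are throttled.

```python
def required_concurrency(requests_per_second: int, avg_duration_ms: int) -> int:
    """Little's law: concurrent executions = arrival rate x average duration.

    Integer ceiling division avoids floating-point rounding surprises.
    """
    return -(-requests_per_second * avg_duration_ms // 1000)

def max_throughput(concurrency_limit: int, avg_duration_ms: int) -> int:
    """Requests per second that a capped number of instances can complete."""
    return concurrency_limit * 1000 // avg_duration_ms

def served_rate(requests_per_second: int, avg_duration_ms: int, concurrency_limit: int) -> int:
    """Requests per second actually served when excess load is throttled."""
    return min(requests_per_second, max_throughput(concurrency_limit, avg_duration_ms))

# Hypothetical numbers: 200 ms per request, cap of 500 concurrent executions.
print(required_concurrency(50, 200))     # 10 instances for normal traffic
print(required_concurrency(5_000, 200))  # 1000 instances during a flood
print(served_rate(5_000, 200, 500))      # 2500 requests/s served; the rest are throttled
```

Under these assumptions the elasticity absorbs a flood only up to the platform's limit, which is why the question of how high the elasticity really is matters.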

How does it hurt security?

1. Stronger dependence on external services

Serverless applications are practically never built on FaaS alone. They are usually based on a network of services connected by events and data. While some of these are the application's own functions, many are operated by others. In fact, the small size and statelessness of functions are leading to a significant increase in the use of third-party services, both cloud platform services and external ones.

Any third-party service is a potential compromise point. These services receive and deliver data, influence workflows, and provide extensive and complex input into our system. If such a service proves to be malicious, it can often cause significant damage.
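One common way to treat a third-party service as a potential compromise point is to authenticate and validate everything it sends. The sketch below verifies an HMAC-SHA256 signature over a webhook body with Python's standard `hmac` module and then checks the payload shape. The shared secret, the signing scheme and the `order_id` field are assumptions for illustration; real services document their own signing schemes.

```python
import hashlib
import hmac
import json

# Hypothetical secret shared with the third-party service; in practice it would
# come from a secret store, never from source code.
SHARED_SECRET = b"example-secret-for-illustration-only"

def sign(body: bytes, secret: bytes = SHARED_SECRET) -> str:
    """HMAC-SHA256 signature the sender is assumed to attach to each request."""
    return hmac.new(secret, body, hashlib.sha256).hexdigest()

def accept_webhook(body: bytes, signature: str) -> dict:
    # 1. Authenticity: constant-time comparison against the expected signature.
    if not hmac.compare_digest(sign(body), signature):
        raise PermissionError("signature mismatch: payload not from the expected sender")
    # 2. Shape: even an authentic sender may be compromised, so check the content too.
    data = json.loads(body)
    if not isinstance(data, dict) or not isinstance(data.get("order_id"), str):
        raise ValueError("unexpected payload shape")
    return data

body = json.dumps({"order_id": "A-1001"}).encode()
print(accept_webhook(body, sign(body)))  # {'order_id': 'A-1001'}

try:
    accept_webhook(b'{"order_id": "A-9999"}', sign(body))  # tampered body, old signature
except PermissionError as exc:
    print(exc)  # signature mismatch: payload not from the expected sender
```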

2. Each function expands the attack surface

While functions are technically independent, most are called in sequence. As a result, many functions assume that another function has run before them and has in some way sanitized the data. In other words, functions begin to trust their input, believing that it comes from a trusted source.

This approach is extremely fragile from a security standpoint. Firstly, an attacker may be able to invoke these functions directly. Secondly, the function may later be added to a new flow that does not sanitize the input. And thirdly, an attacker who compromises one of the other functions gains easy and direct access to a poorly defended peer.
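All three problems are avoided if every function validates its own input, whatever runs before it. In this hypothetical sketch, `sanitize_step` is the upstream function of the intended flow, and `create_account` re-checks the username against an assumed rule (3 to 32 lowercase letters, digits or underscores) instead of trusting that sanitization has already happened.

```python
import re

# Hypothetical rule for this example: 3-32 lowercase letters, digits or underscores.
USERNAME_RE = re.compile(r"[a-z0-9_]{3,32}")

def sanitize_step(raw: dict) -> dict:
    """Upstream function in the intended flow."""
    return {"username": str(raw.get("username", "")).strip().lower()}

def create_account(event: dict) -> dict:
    """Downstream function: re-validates even though sanitize_step usually runs first."""
    username = event.get("username")
    if not isinstance(username, str) or not USERNAME_RE.fullmatch(username):
        raise ValueError("invalid username")
    return {"created": username}

print(create_account(sanitize_step({"username": "  Alice_01 "})))  # {'created': 'alice_01'}

try:
    # Direct call that skips sanitize_step, as an attacker might do.
    create_account({"username": "alice'; DROP TABLE users;--"})
except ValueError as exc:
    print(exc)  # invalid username
```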

3. Simple deployment leads to an explosion of functions

Deploying functions is very simple. It is automated and costs next to nothing as long as the function is not heavily used. At such a low cost, the question is no longer where we should use a function, but rather why we shouldn't. As a result, many functions are deployed, even though many of them are rarely used. Moreover, deployed functions are very hard to remove, because you never know what depends on their existence.

Combined with excessive privileges, which are similarly difficult to reduce, this results in an explosion of hard-to-remove, overly powerful functions that ultimately provide attackers with a rich and ever-growing attack surface.
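Both problems can at least be made visible. The sketch compares, per function, the permissions granted with those actually observed in use, and lists functions that were never invoked. The inventory format, function names and permission names are hypothetical; in practice the data would come from the platform's access-policy and logging services.

```python
from collections.abc import Mapping

def excess_permissions(granted: Mapping[str, set[str]],
                       used: Mapping[str, set[str]]) -> dict[str, list[str]]:
    """Per function, permissions that were granted but never observed in use."""
    report: dict[str, list[str]] = {}
    for fn, perms in granted.items():
        unused = perms - used.get(fn, set())
        if unused:
            report[fn] = sorted(unused)
    return report

def never_invoked(granted: Mapping[str, set[str]],
                  invocation_counts: Mapping[str, int]) -> list[str]:
    """Deployed functions with no recorded invocations in the observation window."""
    return sorted(fn for fn in granted if invocation_counts.get(fn, 0) == 0)

# Hypothetical inventory: function name -> permissions granted / observed in logs.
granted = {
    "resize-image": {"storage:read", "storage:write"},
    "export-report": {"db:read", "db:write", "storage:delete"},
    "legacy-sync": {"db:write"},
}
used = {
    "resize-image": {"storage:read", "storage:write"},
    "export-report": {"db:read"},
}
invocations = {"resize-image": 1200, "export-report": 3}

print(excess_permissions(granted, used))
# {'export-report': ['db:write', 'storage:delete'], 'legacy-sync': ['db:write']}
print(never_invoked(granted, invocations))  # ['legacy-sync']
```

An unused permission or function within the observation window is only a candidate for removal, since something may still depend on it.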

Summary

Like any system, serverless computing has its strengths and weaknesses. On the one hand, it makes the user's work easier through automatic scaling, extreme elasticity and automatic management of OS patches. Not having to worry about the servers is a great help for the user.

But like any other system, serverless computing has security weaknesses that can cause anything from minor to severe damage. Above all, serverless applications are highly exposed to external attacks because of their large attack surface.

Users should therefore be aware that adopting serverless computing does not automatically take care of security issues. Serverless computing is certainly not suitable where close individual monitoring and control of the server are required. But where easy control and management of the server are the priority, it is an optimal solution. Users should simply keep in mind that serverless computing is not necessarily the most secure option.

Research Questions

- How high is the elasticity?

- Does it really have endlessly expandable capacity?

- Are data at risk during transmission?

- Can they be safely protected?

- Are granular permissions actually being managed for hundreds or thousands of functions?

- Is it feasible?

- Are users at all concerned with security issues when FaaS automatically handles server-level security concerns?
