Abstract

Today's secure systems are already sophisticated and perform well, and research in areas such as quantum computing promises further improvement. However, even in a technically secure system, humans remain the most significant threat. Social engineers exploit this weakness to conduct illegal activities. To support early detection and prevention, this paper analyzes and discusses social engineering attacks. The major challenge is to balance trust and mistrust, and the right threshold varies with the application. It is therefore advisable to extract patterns from past incidents and to recognize them in future scenarios. First, the basic principles and techniques of social engineers are introduced. Three different models are then analyzed. The effects of social networks and the feasibility of the models are examined using the 58th US presidential election. Finally, possibilities for avoidance, prevention and recovery are discussed.


List of figures

- The missing link

- Survey cybercrime

- Mitnick attack cycle

- Attack cycle

- Defense cycle

- Victim cycle

- Cycle of deception

- Spherical view

- Attack classification

- Ontological model

- Framework

- Role structure applied on 58th US presidential election

- Framework applied on 58th US presidential election

Motivation

In times of fake news, manipulation of US presidential elections, hacking attacks that are easy to procure on the darknet and increasingly transparent identities, system security is fundamental to safeguarding privacy and preventing economically motivated data theft.

With the current state of the art, computer systems can be satisfactorily protected at both the software and hardware levels. In the future, the potential of quantum computers could strengthen this protection further and take IT security to the next step.

As long as our systems are not autonomous, they will always tend to have one weak point: us, the humans. Figure 1 illustrates the missing link between the different types of security and points to the problem of human security in our systems.


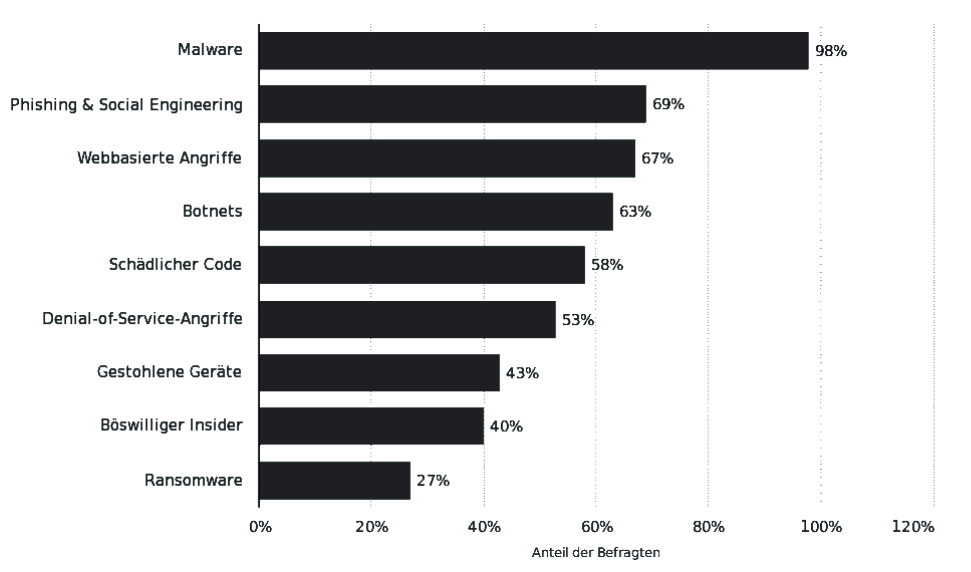

Social engineers exploit this gap for their own benefit. Social engineering has a long history, reaching from phreaking in the 1970s to today's ingenious, complex attacks. Even today, social engineering is one of the biggest threats to businesses, alongside malware. This is shown in Figure 2, which presents the results of a survey on cybercrime among 254 international companies.


Social engineering is therefore a global phenomenon and a major risk for a large number of companies. For this reason, it is important to deal with the topic.

Definition

There is no explicit definition of social engineering. The first known definition is from Quann and Belford and already fulfills the core statement quite accurately.

An attempt to exploit the help desks and other related support services normally associated with computer systems

In other words, it is about exploiting help desks and similar support services associated with computer systems.

The most widespread definition is the one by Kevin Mitnick.

Using influence and persuasion to deceive people and take advantage of their misplaced trust in order to obtain insider information

It states that people can be persuaded to divulge secret information[8].

The most suitable definition found during this research is the following.

Any act that influences a person to take an action that may or may not be in their best interest

This indicates that social engineering takes place whenever a person is influenced to take an action, whether or not that action is in the person's interest.

It is even more generic and also points out that the manipulation does not necessarily have to be negative.

For example, educational measures also count as social engineering[5].

Research questions

This paper addresses the following questions, which are answered and discussed throughout.

- What are the key features and techniques?

- To what extent can attacks be mapped into models?

- What is the value of the models?

- How reliable are these?

- What opportunities do social media offer?

- Did Donald Trump hack his way into the presidency?

- What countermeasures exist?

- What is the impact on companies?

Principles & Techniques

The principles fall into two groups: compliance principles and psychological principles.

First, we look at Cialdini’s six key compliance principles. These principles make people compliant.

- Friendship or sympathy: people tend to fulfill a wish of a friend rather than that of a stranger.

- Commitment or consistency: people are inclined to honor their commitments. In other words, if we have already helped a person once, we tend to help with new requests as well, because it seems a matter of course.

- Scarcity: rare or urgent requests are more likely to be met.

- Reciprocity: people help those who have helped them previously.

- Social validation: people assist someone when doing so is considered socially correct.

- Authority: people tend to obey authority figures, even if the requested actions are objectionable[6].

Social engineers use the four psychological principles to read human emotions better and to manipulate them specifically.

- Microexpressions: the interpretation of human emotions is essential.

- Instant rapport defines guidelines for building a harmonious relationship with the interlocutor. This includes advice such as using friendly body language or giving the conversation partner the feeling of being needed. The goal is to gradually build up a false friendship, so that a constant flow of information is achieved.

- Interview & interrogation focuses on the analysis of body language. Decisive factors are posture, skin tone, eye characteristics, voice, choice of words or even the hands. An intriguing observation is that in stressful situations the sinus membrane in the face dries out, which causes people to touch their faces more frequently. In addition, an interview can be adjusted to the four common personality types, such as active extrovert or introvert.

- The human buffer overflow means that, just as in software, the mind expects certain conditions. This makes implicit commands possible. With the help of other contexts as well as mental padding, implicit commands can be utilized more easily[5].

There is a wide variety of techniques for social engineering attacks. Covering all of them would exceed the scope of this paper, so only the most common and most relevant are outlined next.

- Phreaking is one of the earliest techniques. Phone phreaks understood the telephone network better than support employees and could make free phone calls with so-called blue boxes. The trick was to play the tones that the network itself used for call routing.

- Phishing is the best-known technique. It covers spam, emails, social media messages, etc.

- Spear Phishing means that a few personalized emails are sent to specific targets. A higher success rate of approx. 50% is achieved, at the cost of greater effort.

- Baiting corresponds to a real-world Trojan horse. The attacker leaves infected hardware in places where the victim will find it and connect it out of curiosity.

- In Dumpster Diving, the victim’s garbage is searched for information. This is especially relevant for IT companies.

- Water holing exploits the fact that potential victims are more likely to click on links they know. The attacker delivers malware through websites the victim frequently visits, or through fake copies of them.

- Quid pro quo means that the attacker randomly dials phone numbers at the target organization and poses as its support staff, offering to resolve a problem.

- Piggybacking means the attacker gains access to a building without identification by simply following a person who has identification. The person will hold the door open as a courtesy[5][8][7].

Models

This section outlines three different models for social engineering attacks. The first is the well-known Mitnick attack cycle. The second is the cycle of deception by Nohlberg and Kowalski. The third is the ontological model by Mouton et al.

Model 1: Mitnick attack cycle

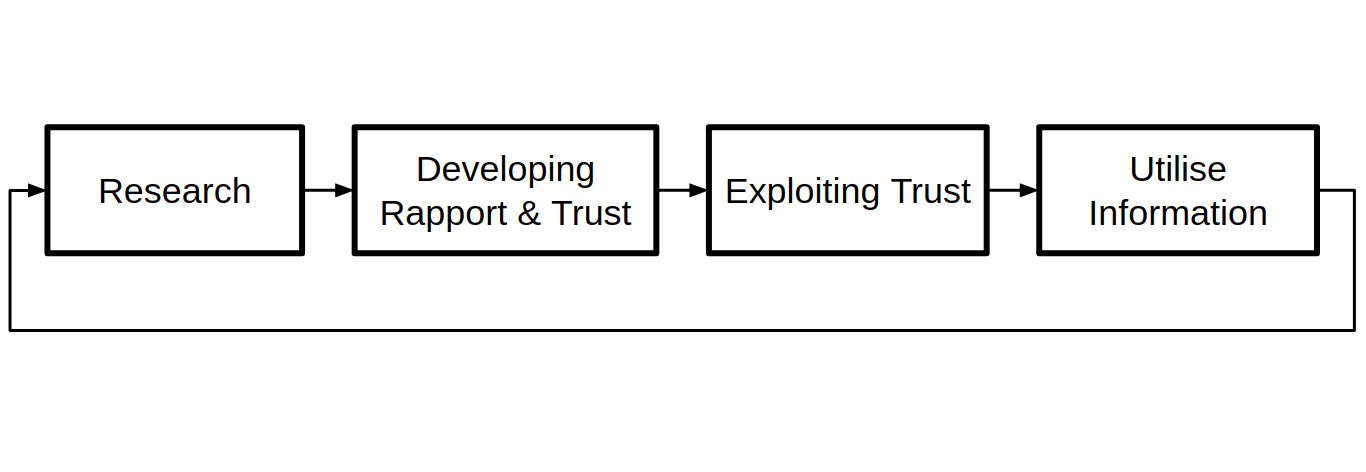

Figure 3 illustrates the four steps that constitute the model.


In the first step, information about the target is collected. Before the attack is executed, as much knowledge as possible about the target should be gathered.

In the next phase, trust and a relationship are built up with the target.

This is achieved, for instance, through helpfulness or by assuming the identity of an authority figure.

Next, the trust is exploited by asking for confidential information, by demanding a certain action, or by getting the target to seek help from the attacker.

Finally, the outcome of the previous step is used to achieve the goal or to continue with further cycles.

The advantage of this model is its simplicity. Its disadvantages are that the individual steps are not transparent and do not allow recommendations for protective measures[1][8][2].
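The four steps can be written down as a small state machine. The following is only a minimal sketch: the phase names are labels chosen here for the steps described above, and the `goal_reached` flag is an assumption used to express the choice between stopping and continuing.

```python
from enum import Enum


class MitnickPhase(Enum):
    """The four phases of the attack cycle, in order (labels are illustrative)."""
    RESEARCH = 1
    DEVELOP_TRUST = 2
    EXPLOIT_TRUST = 3
    UTILIZE_OUTCOME = 4


def next_phase(phase, goal_reached=False):
    """Return the phase that follows `phase`, or None when the attack ends.

    After the last phase the attacker either stops (goal reached) or starts
    another pass through the cycle with the outcome obtained so far.
    """
    if phase is MitnickPhase.UTILIZE_OUTCOME:
        return None if goal_reached else MitnickPhase.RESEARCH
    return MitnickPhase(phase.value + 1)
```

The sketch also makes the stated weakness visible: the model only says which phase comes next, not what happens inside a phase or how a defender could interrupt it.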

Model 2: Cycle of deception

This model consists of three cycles: the attack cycle, the defense cycle and the victim cycle.

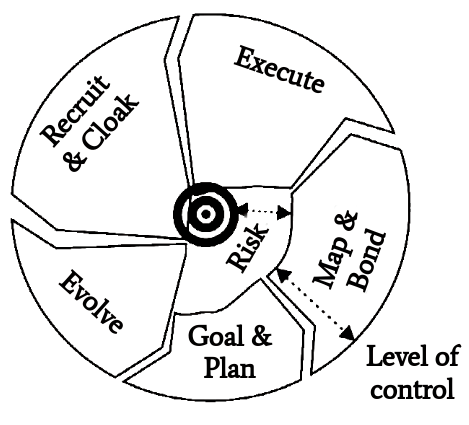

The attack cycle depicted in figure 4 starts with the Goal & Plan phase.


The attacker pursues a purpose, so criminal knowledge is beneficial. Classical characteristics are methods, motive, opportunity and means.

Map & Bond is about gathering information through social engineering techniques. This could be Dumpster Diving, desktop hacking or making false friends.

The Execute step represents the illegal actions themselves, e.g. hacking, sending malware or asking for credentials.

Recruit & Cloak tries to mask the illegal activity.

Finally, the Evolve step is a retrospective. It evaluates whether the process went as intended, whether the attack was stopped, or whether it should have been turned into a simpler attack.

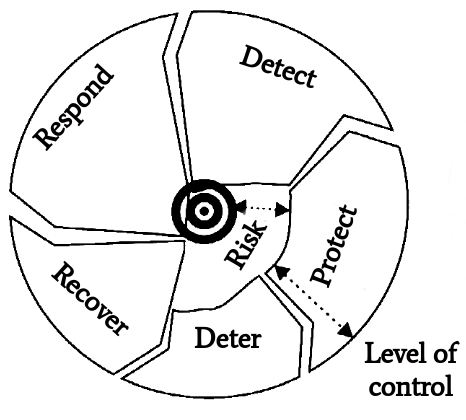

In the defense cycle displayed in figure 5, the first step is Deter.


It concerns deterring attackers, for example through a mature, publicly known company policy or by reporting incidents to the police.

Next comes Protect. As little sensitive data as possible should be accessible to the outside world, and employees should be informed about the risks and methods of attackers.

In the Detect step, network traffic is monitored for sensitive data.

Respond is about reporting social engineering incidents without social or professional stigma. In addition, raising employee awareness makes it possible to react to ongoing attacks.

Finally, the Recovery step is about recovering from an attack and learning from it, by knowing the value of your data, reporting attacks and creating well thought-out company policies.
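As an illustration of the Detect step, the following sketch scans outgoing text for content that looks sensitive. It is a deliberately simplified assumption of what such monitoring could look like: the two patterns and the function name `find_sensitive` are made up for this example, and real traffic monitoring would be considerably more involved.

```python
import re

# Hypothetical patterns for data an organization might classify as sensitive.
SENSITIVE_PATTERNS = {
    "password": re.compile(r"(?i)\bpassword\s*[:=]\s*\S+"),
    "credit_card": re.compile(r"\b(?:\d{4}[ -]?){3}\d{4}\b"),
}


def find_sensitive(message):
    """Return the names of all patterns that occur in an outgoing message."""
    return [name for name, pattern in SENSITIVE_PATTERNS.items()
            if pattern.search(message)]
```

A message such as `"My password: hunter2"` would be flagged under `"password"`, whereas ordinary conversation passes without a match.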

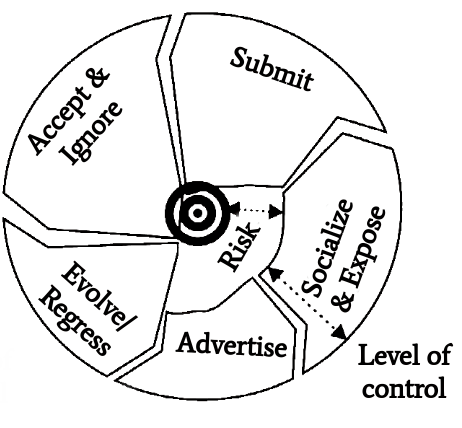

A common error in the analysis of attacks is an excessive focus on the attacker. Many incidents can be prevented more easily by focusing on the victim, hence the victim cycle shown in Figure 6.


It begins with the Advertise phase. An employee who has a certain value and makes it known attracts the attention of attackers.

This is followed by the Socialize & Expose phase. The victim and the attacker get to know each other, which forms the basis for the deception.

In the Submit step, the actual attack is executed. The victim complies with the attacker and reveals the confidential information.

At this point, the victim can either accept or ignore what happened. Typically, victims tell themselves that the incident was not harmful, or they ignore it deliberately.

Afterwards, the victim either evolves and becomes more skeptical, or regresses and becomes an even more naive victim.

All cycles have an additional dimension, the level of control. It relates the attacker’s goal to the route towards it, which is also indicated in the illustrations of the cycles. The goal is reached by increasing the level of control, and until then there is always a certain risk.

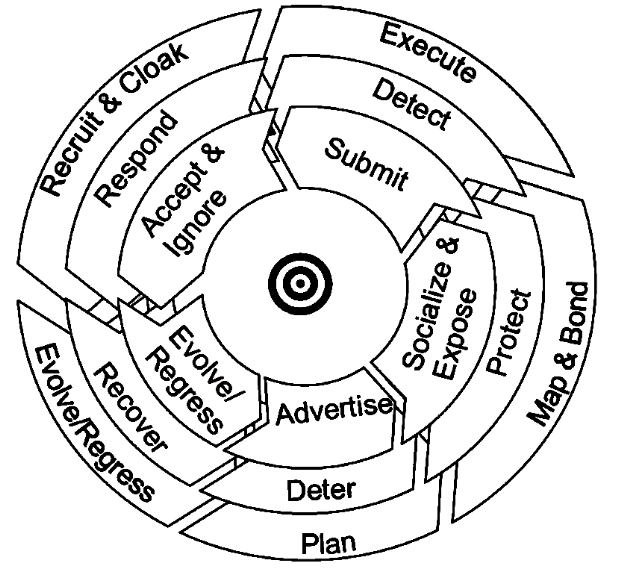

The integration of all previous cycles yields the so-called cycle of deception depicted in figure 7.


By merging the cycles, a number of observations can be made.

In the attack cycle, the first three steps are enough for a one-time success of the attack. The Recruit & Cloak and Evolve steps must also be completed in order to perform future attacks.

In the defense cycle, a single successful step is sufficient to stop the attack.

Therefore, an attack can fail for many reasons, e.g. if no plan or method for the attack can be found, if cloaking the attack is not possible, if the attacker judges the attack to be unfeasible, or if no information on the potential victim can be obtained.

In the victim cycle, every step must be passed through for an attack to succeed.

To prevent attacks, countermeasures are needed for the first three steps.

For aftercare, countermeasures must be established for the Recruit & Cloak and Evolve steps.
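These observations about the attack and defense cycles can be condensed into a few rules. The sketch below is one possible reading, not part of the original model: the function name `attack_outcome` and the four outcome labels are assumptions introduced for illustration.

```python
ATTACK_STEPS = ("Goal & Plan", "Map & Bond", "Execute", "Recruit & Cloak", "Evolve")


def attack_outcome(completed_steps, stopped_by_defense):
    """Classify an attack according to the observations above.

    completed_steps: set of attack-cycle step names the attacker finished.
    stopped_by_defense: True if at least one defense step stopped the attack.
    """
    completed = set(completed_steps)
    if stopped_by_defense:
        # A single successful defense step is sufficient.
        return "stopped"
    if not set(ATTACK_STEPS[:3]) <= completed:
        # One of the first three steps is missing, so the attack fails.
        return "failed"
    if set(ATTACK_STEPS) <= completed:
        # Cloaking and evolving allow the attacker to strike again.
        return "repeatable"
    return "one-time success"
```

Read this way, prevention targets the "failed" and "stopped" branches, while aftercare aims to keep a one-time success from becoming repeatable.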

In addition, there are the further elements of control, time and impact. The impact describes how obvious the attack is to the victim and the organization.

The attacker’s goal is to achieve a high level of control as fast as possible and with little impact. Additionally, several minor attacks can be performed to implement a single step of the main attack cycle.

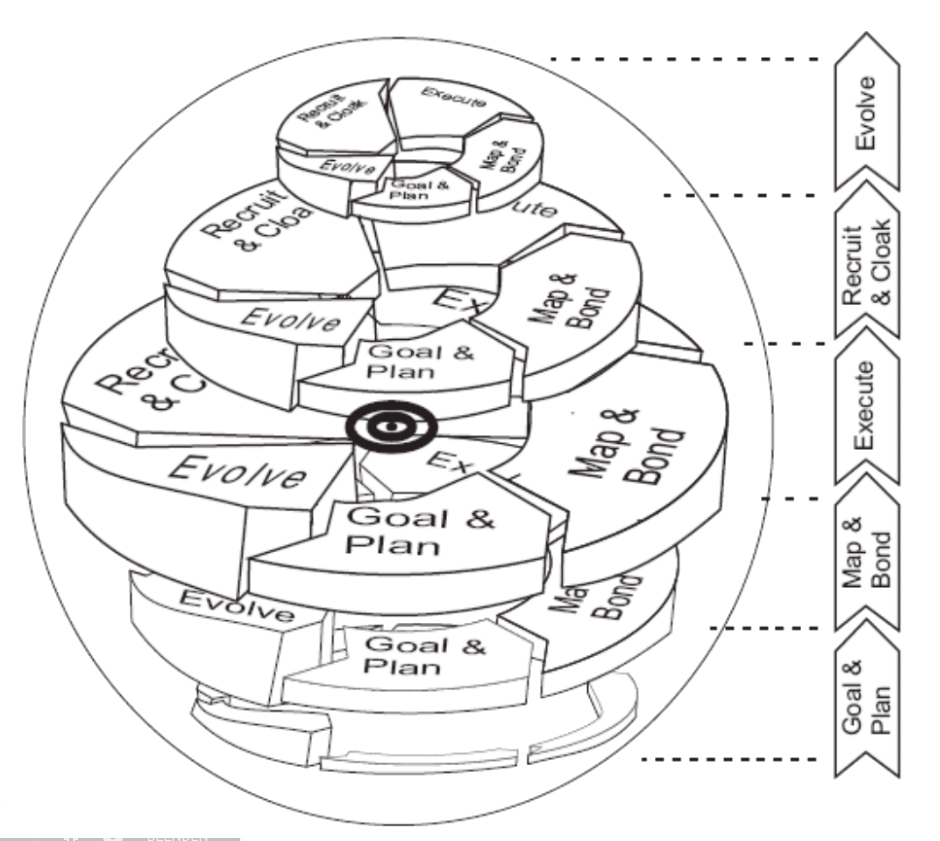

If these factors are added, this leads to a spherical view illustrated in figure 8.


One benefit is that the model contains the attacker, victim and defense components, which enables the development of protection strategies. It is modular, so the focus can be placed on individual steps. It could also serve as the basis for an AI bot for training people, which would make penetration tests possible.

However, a disadvantage is its high complexity[2].

Model 3: Ontological model

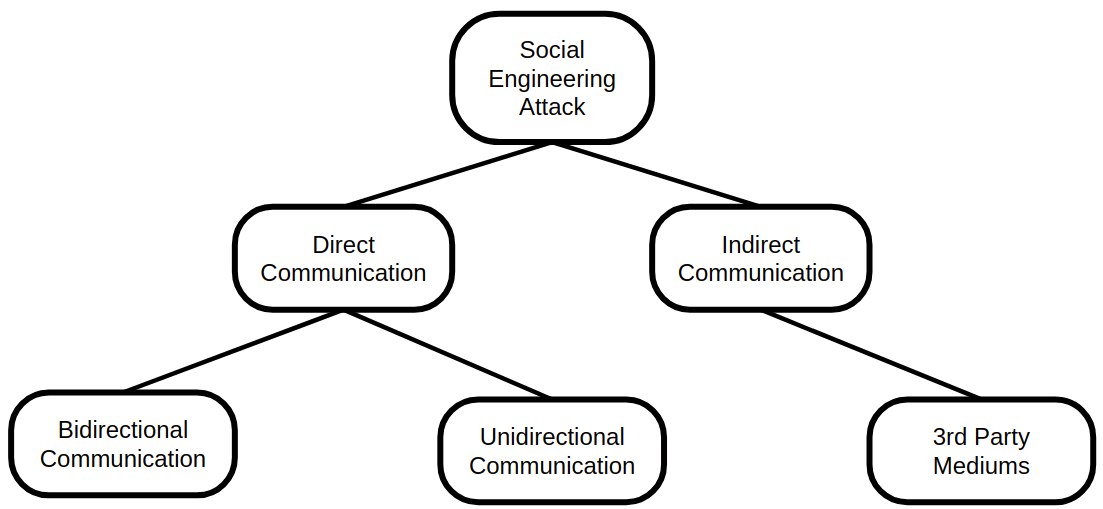

With this model, the attack is first classified. Figure 9 illustrates the attack classification tree.


First, a social engineering attack is classified as either direct or indirect communication.

Direct communication is further divided into bidirectional and unidirectional communication. Examples of bidirectional communication are conversations via email or messengers, in which two people converse. In unidirectional communication there are no replies. Classic examples are phishing emails or phishing via social networks.

In indirect communication, there is no actual interaction between the attacker and the target. Communication happens via a third-party medium, e.g. a compromised USB stick.
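The classification tree amounts to two yes/no decisions. A minimal sketch follows; the enum and the two boolean parameters are naming assumptions made for this example.

```python
from enum import Enum


class Communication(Enum):
    BIDIRECTIONAL = "direct, bidirectional"
    UNIDIRECTIONAL = "direct, unidirectional"
    INDIRECT = "indirect"


def classify(attacker_interacts_with_target, target_replies):
    """Walk the classification tree from the root to a leaf."""
    if not attacker_interacts_with_target:
        # Communication runs through a third-party medium, e.g. a USB stick.
        return Communication.INDIRECT
    if target_replies:
        return Communication.BIDIRECTIONAL
    return Communication.UNIDIRECTIONAL
```

For example, a phishing email is `classify(True, False)`, and a prepared USB stick left in a parking lot is `classify(False, False)`.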

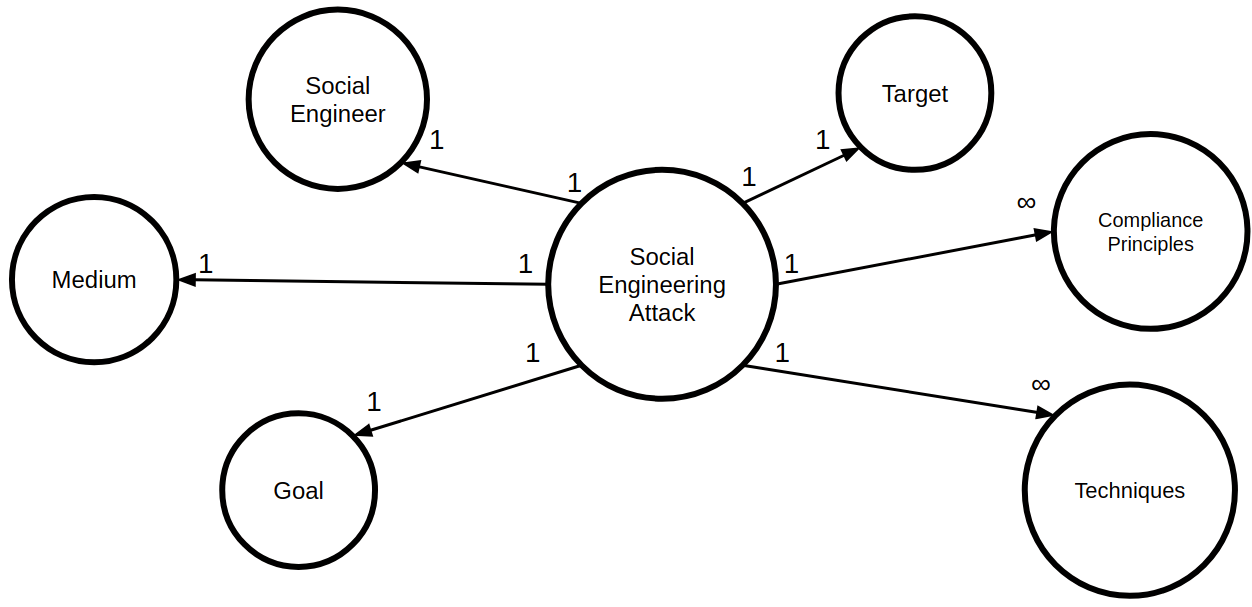

Next, Figure 10 shows the role structure of a social engineering attack.


The social engineer is an individual or a group.

The target is likewise an individual, a group or a company.

One or more compliance principles are also included. These are the reasons why the target fulfills the attacker’s request; they were introduced in the section Principles & Techniques.

Another part of a social engineering attack is the communication medium, such as email, face-to-face conversation or telephone.

The most important point is the goal of the attack. Possible goals are financial gain, unauthorized access or service disruption.
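The role structure maps naturally onto a record type. The following sketch assumes field names chosen for this example and uses the six compliance principles listed earlier; the sample values in the usage note are invented.

```python
from dataclasses import dataclass
from enum import Enum


class CompliancePrinciple(Enum):
    FRIENDSHIP = "friendship or sympathy"
    COMMITMENT = "commitment or consistency"
    SCARCITY = "scarcity"
    RECIPROCITY = "reciprocity"
    SOCIAL_VALIDATION = "social validation"
    AUTHORITY = "authority"


@dataclass(frozen=True)
class AttackRoles:
    social_engineer: str            # an individual or a group
    target: str                     # an individual, a group or a company
    compliance_principles: frozenset
    medium: str                     # e.g. email, face to face, telephone
    goal: str                       # e.g. financial gain, unauthorized access

    def __post_init__(self):
        # The model requires one or more compliance principles.
        if not self.compliance_principles:
            raise ValueError("at least one compliance principle is required")
```

A fictitious help desk call could then be described as `AttackRoles("caller posing as IT support", "new employee", frozenset({CompliancePrinciple.AUTHORITY}), "telephone", "unauthorized access")`.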

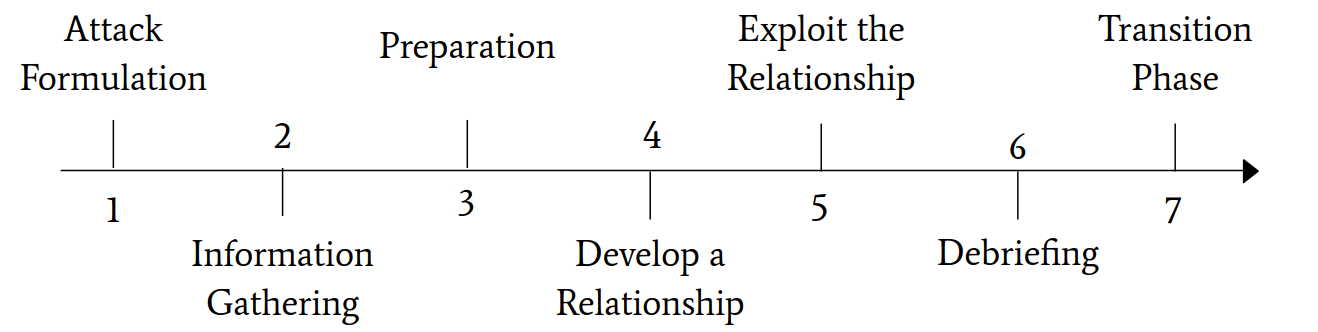

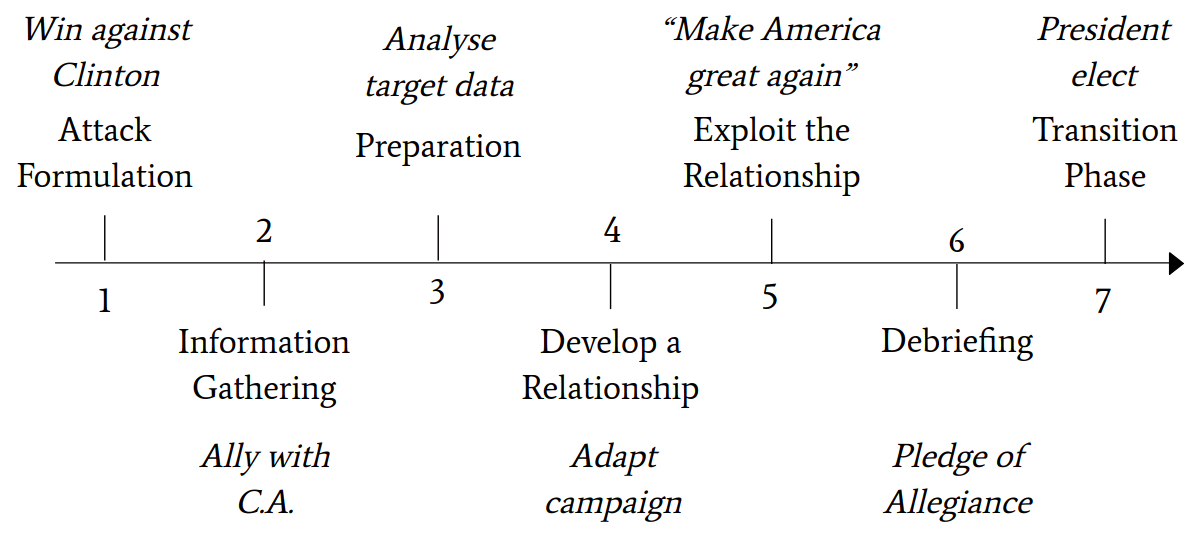

This role structure enables a process flow to be defined, which is implemented in the framework shown in Figure 11. The figure refers to the aforementioned role structure and outlines the course of the attack.


- In the Attack Formulation step, the goal of the attack and the best possible target are defined.

- Information Gathering means collecting information about the goal and the target.

- Preparation means that the collected information is processed to develop an attack vector.

- Develop a Relationship covers establishing initial communication using the collected information and building trust.

- Exploit the Relationship first lays the foundation for eliciting the information by analyzing the emotional state of the target. Then the attacker figures out how to manipulate it in order to execute the attack.

- In the Debriefing step, the target is returned to a normal emotional state after the exploit.

- Finally, the Transition Phase offers two options: either going back to the Information Gathering step or, if the goal is fulfilled, ending the process.
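The process flow listed above, including the loop back from the Transition Phase, can be sketched as a generator. The callback `goal_fulfilled` and the `max_rounds` safety limit are assumptions added for this example and are not part of the framework.

```python
from enum import Enum, auto


class Step(Enum):
    ATTACK_FORMULATION = auto()
    INFORMATION_GATHERING = auto()
    PREPARATION = auto()
    DEVELOP_RELATIONSHIP = auto()
    EXPLOIT_RELATIONSHIP = auto()
    DEBRIEFING = auto()
    TRANSITION = auto()


# Every step except the first one is repeated when the attacker loops back.
LOOP_STEPS = [step for step in Step if step is not Step.ATTACK_FORMULATION]


def walk(goal_fulfilled, max_rounds=10):
    """Yield the steps of an attack in order.

    goal_fulfilled: callable taking the round number (starting at 1) and
    returning True once the attacker's goal has been reached.
    """
    yield Step.ATTACK_FORMULATION
    for round_number in range(1, max_rounds + 1):
        for step in LOOP_STEPS:
            yield step
        # Transition Phase: end the process or return to Information Gathering.
        if goal_fulfilled(round_number):
            return
```

For instance, `list(walk(lambda round_number: round_number >= 2))` traces an attack that needs two passes: Attack Formulation occurs once, and the remaining six steps occur twice.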

In summary, social engineering attacks follow certain recurring patterns. The three models introduced here attempt to capture them with different approaches and varying degrees of detail, and each has its advantages and disadvantages. The Mitnick attack cycle is simple, possibly so simple that it is inaccurate for some scenarios. The cycle of deception may be ideal as a basis for building an AI that trains people to prevent attacks. On the other hand, it is too sophisticated for many other use cases, such as a one-off employee training course. The ontological model is a promising middle ground because it separates a role structure from a precisely defined procedure[8][3].

Social media

In this section, the influence of social media on social engineering is analyzed using the well-known 58th US presidential election in 2016. In addition, this incident is used to examine the reliability of the promising ontological model.

The following is a brief overview of the events during the election campaign.

- After Donald Trump won the primary, he was far behind Clinton. Therefore, the goal was formulated to beat Clinton.

- The company Global Science Research had gained access to the Facebook API for scientific purposes. This gave it access to data from over 50 million users. Cambridge Analytica is a company that collects and analyzes data on a large scale. Global Science Research illegally passed this data on to Cambridge Analytica. Because of this data, Cambridge Analytica then became involved in the campaign for Donald Trump.

- Subsequently, the data of potential target groups was analyzed in order to adjust the election campaign.

- During canvassing, volunteers were able to obtain in-depth information about potential voters by means of an app. Cambridge Analytica also set up a large system of websites and blogs to provide voters with supposedly independent but tailored information.

- This manipulated the election and exploited the relationship with the voters. The statement “Make America great again” is thus a plea to American voters to comply with Trump’s request.

- The Pledge of Allegiance calmed most voters.

- In the end, Trump achieved his goal of becoming president[9][10][11][12].

Interestingly, Russian hackers reportedly obtained emails related to Clinton during the same period. According to the cited sources, the coverage concerned allegations that Clinton had cooperated with Al-Qaida to assassinate Gaddafi and had armed well-known Al-Qaida terrorists in Libya. This incident additionally harmed Clinton’s reputation[13][14][15].

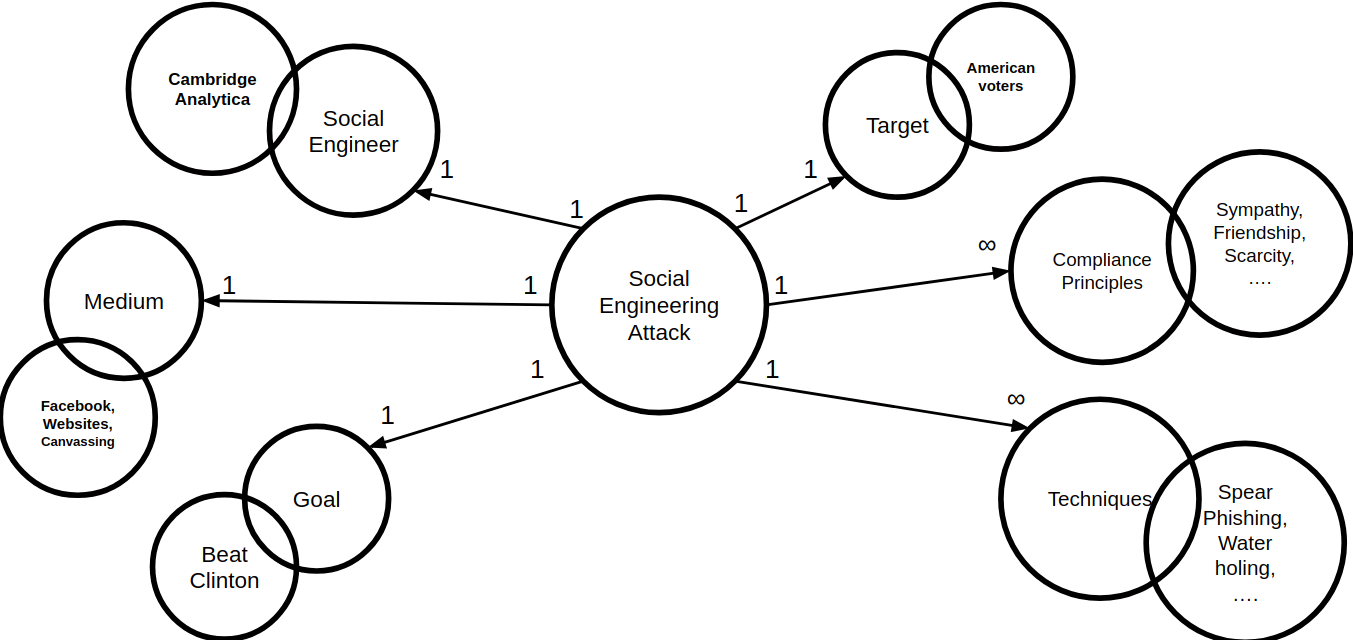

If this incident is projected onto the ontological model, the result is the role structure illustrated in Figure 12.


Donald Trump was evidently not the social engineer himself. Through his campaign team and his son-in-law, his attention was drawn to the actual social engineering organization, Cambridge Analytica.

The goal of winning against Clinton was achieved by influencing voters through the media of canvassing, Facebook and other websites.

A special kind of spear phishing could be carried out during canvassing. With the help of an app provided by Cambridge Analytica, target groups could be filtered out as early as during home visits. The targets could thus be made compliant mainly by means of the compliance principle of friendship or sympathy.

The procedure outlined above is projected onto the framework of the ontological model in Figure 13.


At this point it is evident that the procedure described above can be mapped onto the model reliably and meaningfully, step by step.

This incident demonstrates that social networks hold an enormous amount of information about their users. Since social engineers initially seek to obtain as much information as possible about their victims, social networks are a convenient starting point.

Therefore, social networks may pose a serious threat to companies and individuals in terms of social engineering attacks.

Conclusion

To conclude, options for preventing social engineering attacks are briefly examined for both companies and individuals. It is then discussed whether and how it is possible to recover from a social engineering attack.

A company can take various precautions against social engineering attacks. It is important to create a basis of trust among employees, because social engineers might exploit mistrust where it arises. In addition, security protocols and policies can be applied, including rules such as verifying identities before information is revealed. Disabling macros in Microsoft Office products can also be beneficial, as many Trojans and other malware are installed this way. Blocking USB device connections likewise prevents malware from entering corporate networks via that route. Finally, proper waste management can help prevent Dumpster Diving attacks.
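A rule such as verifying identities before information is revealed can be made concrete with a callback policy. The sketch below is an assumption about how such a rule might look: the directory entries are placeholders, and both function names are made up for this example.

```python
from typing import Optional

# Hypothetical directory of contact numbers the company already has on file.
KNOWN_CONTACTS = {
    "it-support": "+00 000 0001",
    "payroll": "+00 000 0002",
}


def number_to_call_back(claimed_identity: str) -> Optional[str]:
    """Return the number on file for the claimed identity, or None if unknown.

    A number supplied by the caller is never used, because an attacker
    controls it. Only the number already on file is trusted.
    """
    return KNOWN_CONTACTS.get(claimed_identity)


def may_disclose(claimed_identity: str, confirmed_via_callback: bool) -> bool:
    """Allow disclosure only after a successful callback to a known number."""
    return number_to_call_back(claimed_identity) is not None and confirmed_via_callback
```

The design choice is that the decision never depends on anything the requester says about themselves beyond the claimed identity, which counters the authority and friendship principles described earlier.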

An additional important factor is the continuous training of employees and the performance of penetration tests. In this way, awareness of social engineering attacks is constantly maintained, and employees understand how to cope with such a situation. This allows a social engineering attack to be detected and stopped quickly.

Private individuals are encouraged to do the same, but the mindset “Security first” is particularly important here. Especially in social networks, the information disclosed needs to be questioned more often. Google yourself to determine what information is available, and ask yourself whether this information is supposed to be visible. A closer look at the privacy settings reveals which information is available by default, so the settings can then be adapted to your own expectations.

It is now clear that individuals as well as companies can take many different countermeasures to prevent social engineering attacks. But at what cost, and how can a company recover if such an attack has already occurred?

First, there is no silver bullet that applies to everyone. So, when an attack has already taken place, several points should be identified. The following questions are worth raising.

- What are the most relevant goals of the organization or of the individual?

- Is there a target group that was attacked preferentially? If so, why?

- Which communication media are used? Which ones have been exploited? How can this be avoided?

- Which assets are particularly important and worth protecting? How can they be protected?

After these questions have been analyzed and answered precisely in a retrospective of the social engineering attack, further precautions can be taken. Possibly, internal misunderstandings are clarified, new security protocols are established and the trust of the employees is slowly rebuilt. It is particularly important that all groups of employees participate, since the company is only as strong as its weakest employee. In the best case, the specific attack helps to identify further possible attacks. Thus, not only is the current attack overcome, but future attacks of a different kind can also be prevented. However, the process of recovering from a social engineering attack is difficult and fragile. Sensitive issues such as the question of guilt are part of human nature, but they only inhibit recovery.

As a final statement, it is particularly difficult to find a balance between precaution against social engineering and performance. If too many precautions are taken, employees may no longer trust each other. If too few are taken, the risk of social engineering attacks is too serious. Nevertheless, significant progress can be achieved with awareness alone. This awareness must be constantly renewed, however, otherwise the effect will fade.

References

1. The Art of Deception: Controlling the Human Element of Security, Kevin D. Mitnick, William L. Simon, Steve Wozniak (Foreword by), 2002

2. The Cycle of Deception – A Model of Social Engineering Attacks, Defences and Victims, M. Nohlberg and S. Kowalski, 2008

3. Social Engineering Attack Framework, Francois Mouton, Mercia M. Malan, Louise Leenen and H.S. Venter, 2014

4. Hacking the Human: Social Engineering Techniques and Security Countermeasures, Ian Mann, 2008

5. The Official Social Engineering Portal, https://www.social-engineer.org/, (latest access 15.08.2018)

6. Influence: Science and Practice, Robert B. Cialdini, 2001 https://faculty.iiit.ac.in/~bipin/files/Dawkins/July/Robert%20Cialdini%20-%20Influence%252C%20Science%20and%20Practice.pdf, (latest access 15.08.2018)

7. Social Engineering (security), https://en.wikipedia.org/wiki/Social_engineering_(security), (latest access 15.08.2018)

8. Towards an Ontological Model Defining the Social Engineering Domain, Francois Mouton, Louise Leenen, Mercia Malan, H. Venter, https://hal.inria.fr/hal-01383064/document, (latest access 15.08.2018)

9. FAQ: Was wir über den Skandal um Facebook und Cambridge Analytica wissen [Update], Ingo Dachwitz, https://netzpolitik.org/2018/cambridge-analytica-was-wir-ueber-das-groesste-datenleck-in-der-geschichte-von-facebook-wissen/, (latest access 15.08.2018)

10. Social Engineering To the Extreme: the Cambridge Analytica Case, https://www.dogana-project.eu/index.php/social-engineering-blog/11-social-engineering/92-cambridge-analytica, (latest access 15.08.2018)

11. Cambridge Analytica: „Unsere Daten haben Trumps Strategie bestimmt“, Welt, https://www.welt.de/politik/ausland/article174785094/Cambridge-Analytica-Unsere-Daten-haben-Trumps-Strategie-bestimmt.html, (latest access 15.08.2018)

12. Cambridge Analytica Execs Boast Of Role in Getting Donald Trump Elected, Emma Graham-Harrison, Carole Cadwalladr, https://www.theguardian.com/uk-news/2018/mar/20/cambridge-analytica-execs-boast-of-role-in-getting-trump-elected, (latest access 15.08.2018)

13. Russia Hackers Discussed Getting Clinton Emails To Michael Flynn – Report, Julian Borger, https://www.theguardian.com/us-news/2017/jun/30/russia-hackers-clinton-emails-mike-flynn, (latest access 15.08.2018)

14. Hillary-Clinton-Mails: Die dunklen Machenschaften der H. Clinton, https://www.freitag.de/autoren/gela/die-dunklen-machenschaften-der-h-clinton, (latest access 15.08.2018)

15. Hillary Clinton Supplied Cash, Weapons, Tanks, Training To Al-Qaeda To Kill Gaddafi & Weaponize “ISIS” in Syria, True Pundit, https://truepundit.com/hillary-clinton-supplied-cash-weapons-tanks-training-to-al-qaeda-to-kill-gaddafi-weaponize-isis-in-syria/, (latest access 15.08.2018)

16. Survey results, https://de.statista.com/statistik/daten/studie/499324/umfrage/vorfaelle-von-cybercrime-in-unternehmen-weltweit/, (latest access 15.08.2018)
