The safety culture of an organization is the key indicator of its safety performance. It incorporates the visible rules, norms and practices as well as implicit factors such as values, beliefs and assumptions. That is why safety culture is most precisely defined as “the way we do things around here”. Safety is a universal topic since we pursue it permanently and every action is safety related. To improve safety, we first need to understand the organization’s unique safety culture before we can derive tailored actions. This post covers the basic theoretical background of safety culture and focuses on two central components: just culture and learning culture. The resulting principles can increase the resistance of an organization towards its operational hazards but only if they are adapted to the unique situation. There is no generally applicable step-by-step manual on how to implement a safety culture.

Throughout this post we try to find answers to the following research questions: How can we balance safety and accountability? Why do we need accountability? How can we increase safety in even more complex systems? Is human error a valid explanation? How can we implement changes that last? How can we assess safety culture? I am aware that I cannot provide definitive answers to these questions but I believe that putting them at the center of research will fuel the debate and finally yield new insights.

Safety Culture

To demystify the term safety culture, it is helpful to shed some light on its origin, definitions and its surrounding context namely the organizational culture. We will explore fundamental principles and learn why the culture of an organization itself is hard to change. Finally, we illustrate the elements of safety culture and their mutual relationship.

Motivation and origin

To begin with, let’s establish the main reasons why safety culture is relevant. First, safety should be universal. Our deepest instincts strive for safety, hence we expect our daily life to be safe. Nonetheless, throughout history many people have suffered, and still suffer, from workplace-related accidents or catastrophes. Despite all this, safety is often perceived as a source of unnecessary costs, an impairment of productivity and generally as bothersome instead of an investment with a positive dividend. The safety culture of an organization has been shown to be a reliable predictor of the organization’s performance concerning safety. It is often described as the difference between a safe organization and an accident waiting to happen [2,3].

In the last fifty years many tragedies revealed that we do not have total control over the complex systems we have built and evolved over time. We term our technology systems complex because we cannot anticipate every consequence an action entails. Until the 1970s three reasons were predominantly used to explain disasters: fate, human error and technical error. Especially after the Chernobyl catastrophe in 1986 the focus shifted from solely technical failures towards organizational, managerial and human factors to explain the breakdown of safety systems. The INSAG Summary Report on the Post-Accident Review Meeting on the Chernobyl Accident recognizes the importance of the organizational culture as a cause of the accident. The same report also introduces the term safety culture. Every subsequent major accident reinforced the concept of safety culture because it enhanced our knowledge of the factors that render organizations vulnerable to failure. The general assumption emerged that accidents are not just caused by human or technical failure. Since then, common organizational policies and standards that predated incidents have been identified. Nowadays, we have accepted the concept of safety culture as the key instrument to understand disasters and improve safety in the future [4,5,6,7,13].

The need for a safety culture becomes more tangible when we look at the numerous safety management programs that are successfully ignored on a daily basis. Ask yourself: Do you follow every safety-related rule in your everyday life? The discrepancy between formally defined rules, i.e. the safety management system (SMS), and the degree to which they are followed indicates that we need to integrate the gist of those rules into our values and principles. Our culture ultimately controls our actions and thus reflects the way we address safety. Consequently, it is possible to have an effective safety culture without a formal SMS but not the reverse: it is impossible to have a working SMS without a refined safety culture [15].

Organizational Culture

An organization’s safety culture is embedded in its corporate or organizational culture. Therefore, we need to understand this concept and the power that results from it. The corporate culture comprises everything that determines the unique environment of an organization. It characterizes the organization and is often referred to as its DNA. On the one hand, the corporate culture encompasses visible elements such as rules, norms, strategy and practices as well as external factors like the location and industry. On the other hand, there are many potent hidden forces, for instance values, beliefs, assumptions and experiences. Based on this unique culture, actions within an organization are considered either valid or inappropriate. Consequently, the corporate culture regulates every process and interaction in the organization. The saying “culture is what people do when no one is looking” summarizes the gist tellingly [8,9,11].

So far, we have acknowledged the power of an organization’s culture and its crucial role in every change process. But why can’t we just ingrain safety into the culture? Changing the corporate culture is one of the most difficult challenges. Torben Rick provides a sound explanation [10]:

“An organization’s culture reflects its deepest values and beliefs. Trying to change it can call into question everything the organization holds dear, often without that conscious intention.”

He further asserts that the elements of a culture together form a mutually reinforcing system that resists any attempt to change it. As a result, the organizational culture cannot be designed; it evolves over time. Nonetheless, structured change processes can help to establish boundaries that guide the evolution [3,11].

Definition & Main principle

Numerous definitions of the term safety culture have been developed since its introduction by the International Atomic Energy Agency (IAEA) in 1986. The most widely used characterization is provided by the third report of the Advisory Committee on the Safety of Nuclear Installations (ACSNI) in 1993 [12]:

“The safety culture of an organisation is the product of individual and group values, attitudes, perceptions, competencies, and patterns of behaviour that determine the commitment to, and the style and proficiency of, an organisation’s health and safety management. Organisations with a positive safety culture are characterised by communications founded on mutual trust, by shared perceptions of the importance of safety and by confidence in the efficacy of preventive measures.”

This definition highlights the aforementioned difference between the safety management of an organization and its safety culture, which reflects the implicit factors and the resulting visible behavior. In the second part the authors provide key factors for a safety culture such as trust and a shared view regarding the importance of safety. Before we further explore the essentials of a safety culture, looking at James Reason’s characterization will help us recognize its main principle [14]:

“An ideal safety culture is the engine that drives the system towards the goal of sustaining the maximum resistance towards its operational hazards, regardless of the leadership’s personality or current commercial concerns”

He states that a safety culture increases the reliability of an organization. In addition, Reason emphasizes the importance of culture to survive temporary influences such as change in personnel or economic interests.

Characteristics

Aiming to establish a common understanding of safety culture and to enable its assessment necessitates a more granular elaboration of its components. The ECAST Safety Culture Framework developed by the Dutch National Aerospace Laboratory defines six main characteristics of safety culture [15]:

The first dimension addresses the commitment of every level within the organization towards safety and the shared perception of the importance of safety. An honest engagement of the leadership is required to set an example and spread motivation among the workforce. Naturally, safety must be granted top priority.

The second characteristic is the behavior of every level of the organization with respect to enhancing and maintaining safety. Actions that improve safety should be acknowledged and investments of all kinds should be made to advance the level of safety. Consequently, safety-related expenses should not be perceived as a waste of resources but as an attempt to improve the organization.

The third key element is concerned with the employees’ awareness of the risks that the workflows and procedures within the organization impose on themselves and others. This requires considerable attention of the workforce towards safety-related concerns.

The willingness and capability of the organization to learn from previous experiences forms another dimension. A high degree of adaptability entails that the organization conducts necessary actions to improve safety despite obstructions of all kinds.

The fifth characteristic reflects the proper distribution of information to the right people. An appropriate form of communication mitigates the risk of misconceptions. The workforce should be encouraged to report safety issues to establish a reliable source of information.

Justness represents the last dimension and captures the response to safe and unsafe behavior in the form of rewards or discouragement.

Assessing safety culture

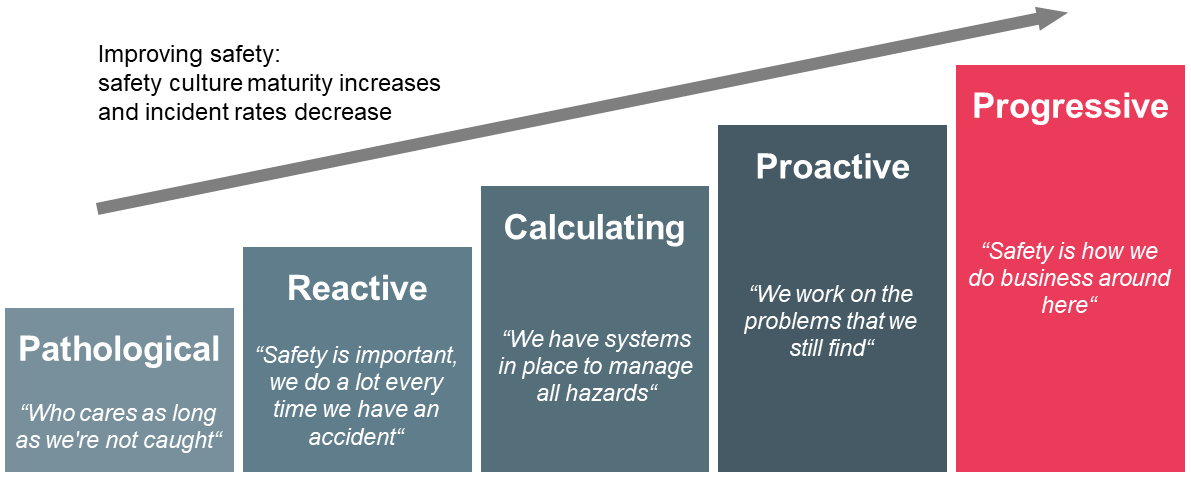

The principal components we identified in the previous section enable the assessment of the maturity of a safety culture. For the sake of brevity we skip the elaboration of low-level indicators derived from the six high-level characteristics as well as the discussion of concrete methods to conduct the assessment, e.g. questionnaires or interviews. Upon completing the assessment, we can employ the safety culture maturity model formulated by Parker et al. in 2006 to classify the level of advancement. The framework defines five ascending levels describing the maturity of safety culture as depicted in Figure 1. As the maturity increases with every level, the number of incidents drops because the safety management proves to be more and more effective [15,16,17,18]:

Everyday life in organizations classified as pathological is characterized by frequent accidents. They are perceived as unavoidable and hence as part of the job. Besides, a lack of safety is not recognized as a major risk to the business and the workforce is barely interested in hazard control. There are no or minimal investments in risk mitigation, safety performance is not monitored, and production takes precedence over safety-related topics.

Organizations in the subsequent stage exhibit reactive behavior after accidents have occurred. The belief remains that incidents result from unsafe behavior of the employees. The actions taken after accidents are mostly temporary and have a disciplinary character in the form of punishment. The organization defines safety merely as compliance with rules and procedures.

The incident rate continues to decline in the third stage because the organization begins to involve the workforce in decisions related to health and safety. Moreover, essential systems to manage hazards are put in place and the leadership notices that there is a broad spectrum of reasons that explain accidents. The priority of safety rises and sometimes even exceeds productivity. In addition, there is an active monitoring of safety performance including an effective usage of the available data.

The fourth level is characterized by the shared belief among the workforce that health and safety is crucial to the moral and economic development of the organization. There is evidence of substantial efforts to proactively address and prevent incidents, and the employees accept personal responsibility for their own and their colleagues’ safety. Every member of the organization is aware that accidents ultimately originate from management decisions.

The primary features of the last stage are the embedding of safety in the core values of the organization and the continuous ambition to improve the hazard management. As a result, safety occupies the number one priority and lessons learnt fuel the ongoing advancement. All members of the organization are connected by their strong confidence in the hazard control program and they are convinced that health and safety is a key aspect of their employment.

Elements of safety culture

So far, we have elaborated on the six principal characteristics of safety culture and applied them to assess the advancement of an organization’s safety culture. We complete the basics of safety culture by exploring the key elements in the form of actionable concepts that emerge from those characteristics. Figure 2 illustrates the components and their causal relation.

Safety culture is founded on the organizational culture, which must ingrain safety as priority number one and must yield a corresponding vision and set of policies. Building on this foundation, Reason identifies five crucial components starting with just culture, which ensures appropriate responses to incidents with the intention to balance safety and accountability. It is imperative that such responses are perceived as just in order to create an atmosphere of trust. This means employees feel safe to report information because they are certain that the provided insights are not used against themselves or to harm colleagues. On the basis of a just culture, a reporting culture actively encourages the workforce to provide information. Gathering sufficient data that reflects the current situation, especially on the shop floor, is an absolute requisite for an organization to reach decisions based on facts instead of subjective instincts. Naturally, an effective reporting culture requires honest and active participation of the workforce and encompasses a practical system to gather and process the collected data [3,19].

Evidently, solely collecting facts is not enough to advance safety in the organization. Hence, a learning culture is required to draw appropriate conclusions and to translate data into actionable steps. Every serious ambition to improve safety relies on actions tailored to the organization’s unique environment instead of universal advice. Putting those steps into practice necessitates a flexible culture, meaning that the organization is willing and able to implement lasting changes despite various impediments. Finally, an informed culture can be established. Its main characteristics are that the organization possesses current knowledge of all factors that determine safety and that decisions are reached based on facts. This entails appropriate risk management. Reason states that an informed culture is equivalent to a safety culture [3,19].

Before we explore the concepts of a just and learning culture I’d like to emphasize the importance of this model because it densely illustrates the scope of safety culture and the causal dependencies of its core elements.

Just Culture

We are now equipped to become acquainted with the essentials of just culture. To this end, we learn why it is a relevant topic, establish basic principles and finally address the question whether all mistakes are equal.

Motivation

We constantly operate in complex systems and due to their nature we cannot anticipate all consequences an action entails. Hence, mistakes that result in incidents are unavoidable. In the aftermath of accidents, we expect experts to provide a comprehensible explanation of the events. Additionally, we want to differentiate between honest mistakes and culpable behavior. This is where the concept of just culture enters the stage because it can be illusory to draw a line between acceptable and unacceptable and objectively decide whether an action crossed that line [20].

Calls for accountability are essential to an open and functioning society and they are deeply ingrained in human relationships. Basically, they express our concern that a person or an organization takes its problems seriously. Unfortunately, merely responding to calls for accountability will likely deteriorate safety and impede justice. This is caused by responses to incidents that are perceived as unjust which can set a vicious cycle in motion: After accidents, human error is often deemed to be the root cause. Thus, management quickly searches for a scapegoat to be publicly named and blamed. As a result, the relationship between management and frontline workers deteriorates. The reduced trust impairs the bidirectional exchange of information and the leadership imposes more approvals, micromanagement and bureaucracy. This fosters professional secrecy as well as self-protection among the workforce, hence safety-related issues are probably not reported. Consequently, problems remain hidden until the next incident occurs, and the cycle continues. In the end, safety suffers the most [20].

The vicious cycle of unjust responses demonstrates that safety is more about relationships and less about bad performance because the perception of a response as unjust is caused by poor relationships. Although justice and culture cannot be controlled directly, we can still improve the relationships to advance safety. Finally, please remember that just culture is a key element in advancing a safety culture because it creates a safe environment for people to report safety-related information [20].

Before we explore the basics of just culture, thinking about why we even blame people in the first place creates useful insights. Sidney Dekker offers a sound explanation [20]:

“Selecting a scapegoat for an accident or incident may be the easy price we pay for our illusion that we actually have control over our risky technologies”

According to Dekker, the alternative to blaming people would be to admit that we have lost control over our complex systems and that failure in complex systems is caused by the emergent behavior of numerous interacting components. This entails acknowledging that failure is never entirely avoidable. Besides, uncertainty is one of the most distressing states for humans. Thus, the fear of failure and the uncertainty about what triggers it feel worse than wrongly accusing a person of misbehavior [20].

Basics, challenges & implications

The ultimate intention of just culture is the creation of an environment which balances safety and accountability constructively. The calls for accountability must not sacrifice the advancement of safety. A just culture favors forward accountability in the form of a learning culture over backward accountability, i.e. indictments and trials. The rationale is that improving safety by applying lessons learnt is more lasting than legal protection. In essence, just culture aims to yield responses to incidents that are seen as just [20].

The first principle addresses the pursuit of full transparency without tolerating everything. This necessitates a climate which encourages the workforce to report proactively and honestly. Information provided in good faith must never be used directly against the messenger or other members of the organization. To be clear, this neither prohibits corrective actions nor forces us to tolerate willful violations. That is why we want to distinguish honest mistakes from culpable behavior. Although drawing a simple line that separates acceptable from unacceptable behavior is appealing, this concept entails serious consequences. The crux lies in the assumption that we can objectively identify culpable actions. For instance, even defining negligence is highly complex, not to mention recognizing such behavior or characterizing honest mistakes. We must accept that drawing a line can be simple but objectively judging on which side an action falls is infeasible. The main reason is that our judgement highly depends on how the story is told and by whom [20].

There are two major implications: First, where the line goes matters less than who draws it. That is why we must clarify the legitimacy of the entity that judges behavior. A strong indicator of legitimacy is expert knowledge. Secondly, it is imperative to collect multiple accounts to assemble a version of what happened that resembles the truth sufficiently. Besides, it is advisable to always ask whose perspective is missing. Keep in mind that the view of accused persons is the easiest to discredit [20].

The second principle is concerned with the perception of human error: Since we assume that people want to do a good job, merely attributing accidents to human failure falls short. It is essential to treat human error as a symptom and not as a cause. This principle can be easily followed by asking what is responsible, not who. We are required to comprehend why certain actions made sense for the operator at that time under those circumstances, e.g. knowledge, time pressure, available tools, stress etc. This is imperative to prevent recurrence because these actions will likely make sense for another person in the same situation as well [20].

As a third principle, we need to foster personal accountability because empowering people to design their work environment increases their readiness to assume responsibility. Please keep in mind that holding people accountable is not equivalent to accusing them [20].

Are all mistakes equal?

We have already established that our judgement of behavior depends on the way the story is told and by whom. It is important to know that at least two other factors impact our perception as well. Both address the question whether all mistakes are equal. First, there is the distinction between technical and normative errors. A technical error is present if the operator performs his job conscientiously but lacks certain skills to handle the situation. We are more likely to forgive technical errors because we are aware that the operator’s abilities have a natural limit and we concede that learning a profession inevitably entails mistakes. In contrast, normative errors are characterized by the assumption of a role and a lack of diligence on the part of the operator. These are less forgivable, and we tend to demand more serious consequences. For example, a rough landing of a plane under special circumstances is classified as a technical error because the pilot remains in his role. If a co-pilot does not wake up his sleeping pilot during an emergency despite orders to the contrary and then handles the situation inappropriately himself, he makes a normative error [20].

The second factor that highly influences our perception of mistakes is hindsight bias. Basically, we judge actions differently if we know the outcome. As a result, bad outcomes of an action fuel the search for mistakes in hindsight and we demand more serious consequences. Thus, the same process which leads to two different outcomes is perceived differently. Unfortunately, there is nothing we can do to eliminate the hindsight bias but to always remind ourselves that we are all affected by this phenomenon and that we must understand the circumstances and situation of the operator at that time [20].

Learning Culture

With a working just culture in place we can translate the raw data provided by the workforce into valuable insights and derive actionable steps to improve safety. In accordance with just culture, the learning culture is based on the assumption that failure is inevitable. It embraces failure because incidents are a highly valuable source of information which can be leveraged to increase resilience. Dr. Steven Spear confirms the importance of continuously adapting to new situations that result from incidents [21]:

“For such an organization, responding to crises is not idiosyncratic work. It is something that is done all the time. It is this responsiveness that is their source of reliability”

An effective learning culture enables an informed culture. Moreover, according to Peter Senge learning faster than the competition is an organization’s only lasting competitive advantage [22]. There is no better opportunity to learn from than failure.

Methods

In the following we will briefly explore two methods to maximize learning in an organization. Let’s begin with blameless post mortems. These meetings are conducted in retrospect, meaning shortly after an incident occurred. The entire team gathers and tries to make sense of what happened. To this end, the involved individuals give a detailed account of their actions, observed effects, assumptions and expectations. Additionally, the team assembles a timeline of the events. The principal objective is to comprehend the decisions reached and understand why these made sense to the involved persons. Blameless post mortems deeply ingrain the principles of just culture because they focus on situational aspects and circumstances instead of accusing colleagues. This is where the attribute blameless takes effect since we conduct post mortems without fear of punishment and we don’t blame individuals for their actions. Naturally, we keep in mind that hindsight bias impacts our perception of behavior. Although we conduct these meetings without disciplinary consequences, we task the involved individuals with preventing a recurrence, or at least mitigating its outcome, and with sharing their knowledge of the incident. This creates personal accountability and advances safety [23].
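To make the structure of such a meeting more concrete, here is a minimal Python sketch of how the collected accounts could be recorded and ordered into a timeline. The `Account` fields mirror the items listed above (actions, observed effects, assumptions, expectations); the class, the function names and the sample data are purely illustrative assumptions and not part of any cited method or tool.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List, Set


@dataclass(frozen=True)
class Account:
    """One person's description of one step (hypothetical structure)."""
    when: datetime
    who: str
    action: str
    assumption: str   # what the person believed to be true at that moment
    expectation: str  # what the person expected the action to achieve
    observed: str     # what actually happened afterwards


def build_timeline(accounts: List[Account]) -> List[Account]:
    """Order all accounts chronologically, regardless of who gave them."""
    return sorted(accounts, key=lambda account: account.when)


def missing_perspectives(involved: Set[str], accounts: List[Account]) -> Set[str]:
    """Return the involved people who have not yet given any account."""
    return involved - {account.who for account in accounts}


# Illustrative data only.
accounts = [
    Account(datetime(2018, 8, 20, 14, 5), "operator B", "restarted the service",
            "the service was hanging", "requests recover", "errors continued"),
    Account(datetime(2018, 8, 20, 14, 0), "operator A", "deployed a config change",
            "the change was already reviewed", "no visible effect", "error rate rose"),
]

timeline = build_timeline(accounts)
still_to_ask = missing_perspectives({"operator A", "operator B", "on-call lead"}, accounts)
```

Recording assumptions and expectations next to each action keeps the focus on why a step made sense at the time, and the second helper is a simple reminder to ask whose perspective is still missing.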

In contrast, chaos engineering is a method to prepare an organization for inevitable incidents. The idea is to actively inject failures into critical systems. Thereby, an organization can simulate errors and rehearse the appropriate incident response. This allows the organization to learn how real systems fail, but in a controlled manner. Usually, the organization identifies critical components and mitigates their weaknesses in a subsequent step. The principal advantage of chaos engineering lies in its continuous proactive application since the organization can expand its knowledge during normal work hours. Besides, constantly practicing incident response creates confidence and normally helps to reduce the consequences of real accidents in the production system [24,25].
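The idea of injecting failures can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not how any particular chaos engineering tool works: `fetch_price` is a hypothetical stand-in for a critical dependency, `with_fault_injection` is an invented wrapper that makes calls fail on purpose with a chosen probability, and `price_with_fallback` plays the role of the incident response that is being rehearsed.

```python
import random
from typing import Callable, Dict, Tuple


class InjectedFailure(Exception):
    """Raised deliberately by the experiment, never by the real dependency."""


def with_fault_injection(call: Callable[[], float], failure_rate: float,
                         rng: random.Random) -> Callable[[], float]:
    """Wrap `call` so that it fails on purpose with probability `failure_rate`."""
    def wrapped() -> float:
        if rng.random() < failure_rate:
            raise InjectedFailure("simulated outage")
        return call()
    return wrapped


def fetch_price() -> float:
    # Hypothetical stand-in for a request to a critical dependency.
    return 9.99


def price_with_fallback(fetch: Callable[[], float], cached: float) -> Tuple[float, str]:
    """The response under rehearsal: serve a cached value if the call fails."""
    try:
        return fetch(), "live"
    except InjectedFailure:
        return cached, "fallback"


def run_experiment(rounds: int, failure_rate: float, seed: int = 0) -> Dict[str, int]:
    """Count how often the live value and the fallback were served."""
    rng = random.Random(seed)  # seeded so that an experiment can be repeated
    flaky_fetch = with_fault_injection(fetch_price, failure_rate, rng)
    outcomes = {"live": 0, "fallback": 0}
    for _ in range(rounds):
        _, source = price_with_fallback(flaky_fetch, cached=9.50)
        outcomes[source] += 1
    return outcomes


if __name__ == "__main__":
    print(run_experiment(rounds=100, failure_rate=0.2))
```

The point of the sketch is that the fallback path is exercised regularly and on purpose, so the team finds out during normal work hours whether it actually works.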

Conclusion

As a closing thought I’d like to emphasize that safety culture is not something you can fully implement all at once and never touch again. To increase safety lastingly it is essential to anchor the necessary values in the corporate culture so that the appropriate practices can be derived and refined over time. Altering the core of an organization necessitates many iterations and a structured change management process. Finally, it is imperative that an organization develops its own safety culture which suits its unique environment and circumstances. Simply copying the safety management system of another organization will likely fail.

Related sources

Content

[1] Grissinger, M. (2014). That’s the Way We Do Things Around Here! Your Actions Speak Louder Than Words When It Comes To Patient Safety. Pharmacy and Therapeutics 39 (5), 308-344.

[2] Holland, J. (2015). Why Safety Culture Is More Important than You Think. Retrieved from https://www.triumvirate.com/blog/why-safety-culture-is-more-important-than-you-think (last access: 20.08.2018)

[3] Houston, A. (2015). Creating a Positive Safety Culture. Retrieved from https://www.icao.int/APAC/Meetings/2015%20APRAST6/06%20-%20IATA_Safety%20Culture%20from%20the%20Top%20Down.pdf (last access: 22.08.2018)

[4] Flin, R., Mearns, K., O’Connor, P. & Bryden, R. (2000). Measuring safety climate: identifying the common features. Safety Science (34), 177-192.

[5] International Nuclear Safety Advisory Group (1991). Safety Culture. Safety Series (75-INSAG-4).

[6] Gadd S., Collins, A. M. (2002). Safety Culture: A review of the literature HSL/2002/25. Health & Safety Laboratory.

[7] Reason, J. (1998). Achieving a safe culture: theory and practice. Work & Stress 12 (3), 293-306.

[8] Business Dictionary (2018). Organizational Culture. Retrieved from http://www.businessdictionary.com/definition/organizational-culture.html (last access: 25.08.2018)

[9] Health and Safety Executive (2018). Organizational culture: Why is organizational culture important? Retrieved from http://www.hse.gov.uk/humanfactors/topics/culture.htm (last access: 22.08.2018)

[10] Rick, T. (2015). Why is organizational culture change difficult? Retrieved from https://www.torbenrick.eu/blog/culture/why-is-organizational-culture-change-difficult/ (last access: 15.08.2018)

[11] Rick, T. (2016). The iceberg that sinks organizational change. Retrieved from https://www.torbenrick.eu/blog/change-management/iceberg-that-sinks-organizational-change/ (last access: 15.08.2018)

[12] ACSNI (1993). Organising for safety. Advisory Committee on the Safety of Nuclear Installations. Human Factors Study Group, Third Report. HSE Books.

[13] International Nuclear Safety Advisory Group (1986). Post-Accident Review Meeting on the Chernobyl Accident. International Atomic Energy Agency.

[14] Reason, J. (1998). Achieving a safe culture: theory and practice. Work & Stress 12 (3), 293-306.

[15] Piers, Montijn, & Balk (2009). Safety culture framework for the ECAST SMS-WG. European Strategic Safety Initiative.

[16] Parker, D., Lawrie, M. & Hudson, P. (2006). A framework for understanding the development of organisational safety culture. Safety Science 44 (6), 551-562.

[17] Behavioral Safety (2018). Strategic Safety Culture Roadmap. Retrieved from http://www.behavioral-safety.com/free-behavioral-safety-resource-center/level-2 (last access: 21.08.2018)

[18] Safety Culture Ladder (2018). Safety Culture Ladder Steps. Retrieved from http://www.veiligheidsladder.org/en/the-safety-culture-ladder/safety-culture-ladder-steps/ (last access: 25.08.2018)

[19] Reason, J. (1997). Managing the risks of organizational accidents. Ashgate.

[20] Dekker, S. (2012). Just Culture: Balancing Safety and Accountability. Ashgate.

[21] Spear, S. (2008). Chasing the Rabbit: How Market Leaders Outdistance the Competition and How Great Companies Can Catch Up and Win. McGraw-Hill.

[22] Senge, P. (1990). The Fifth Discipline: The Art and Practice of the Learning Organization. Doubleday/Currency.

[23] Allspaw, J. (2012). Blameless PostMortems and a Just Culture. Retrieved from https://codeascraft.com/2012/05/22/blameless-postmortems/ (last access: 20.08.2018)

[24] Robbins, J., Krishnan, K., Allspaw, J. & Limoncelli, T. (2012). Resilience Engineering: Learning to Embrace Failure. ACM Queue 10 (9).

[25] Kim, G., Debois, P., Willis, J., Humble, J. & Allspaw, J. (2016). The DevOps Handbook: How to Create World-Class Agility, Reliability, and Security in Technology Organizations. IT Revolution Press.

Images

Figure 1: Own illustration based on [15,17,18]

Figure 2: Own illustration based on [3]
