(Originally written for System Engineering and Management in 02/2020)

Introduction

In the System Engineering course of WS1920, I took the opportunity to look into automating the build process of a Windows desktop application. Specifically, the application in question is built in C#, targeting .NET Framework 4.0 and using Windows Presentation Foundation (WPF) for the user interface. The git repository is hosted on GitLab.com, making this environment the primary focus of this research.

The main objective of this project was to set up a low-cost, low-maintenance build pipeline for a Windows desktop app, but also to gain a better understanding of GitLab’s CI/CD system and of Microsoft’s .NET world, both of which I had dealt with before, but never in great depth. Ideally, the end result would come without any financial cost, so that any private person without a budget for a build server or other paid services would be able to use it.

Infrastructure for Continuous Integration

When developing an application, especially in a team, it can be helpful to continuously build and test the application with the newest code contributions. This helps to find errors in both the application logic and the build process faster and, by extension, enables a smoother development process.

This practice is part of the Continuous Integration (CI) workflow, which, as formulated by Jez Humble, consists of three main principles: [1]

- daily commits to the mainline (e.g. master branch)

- automated building and testing after each commit

- if an error is detected, it needs to be fixed within ten minutes

To allow this kind of workflow, it is essential to have the infrastructure to automatically build the application from a source code repository. Today, most Git providers, like GitLab, GitHub or Bitbucket, provide their own CI infrastructure, including a pipeline system and execution agents (Runners). With this, CI can be used without the need for a dedicated build server.

While GitLab is the focus of this project, a quick overview of the CI systems of the three major Git services, GitLab, GitHub and Bitbucket, is given in the following sections for the sake of completeness.

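In general, a CI pipeline is configured and enabled by placing a YAML (.yml) file in the repository, which contains the configuration and defines one or multiple pipeline stages. The name, location and format vary across the platforms (.gitlab-ci.yml in the repository root for GitLab, bitbucket-pipelines.yml in the root for Bitbucket, and one or more files in the .github/workflows directory for GitHub), but the principle is ultimately the same.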
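As a minimal sketch of the GitLab flavor (the job names and echo commands are placeholders, not taken from this project), a .gitlab-ci.yml with two stages could look like this:

```yaml
# .gitlab-ci.yml in the repository root (minimal sketch; job names are placeholders)
stages:
  - build
  - test

build-job:
  stage: build
  script:
    - echo "Compiling the application..."

test-job:
  stage: test
  script:
    - echo "Running the tests..."
```

By default, the jobs of the test stage only start once all jobs of the build stage have succeeded.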

GitLab CI/CD

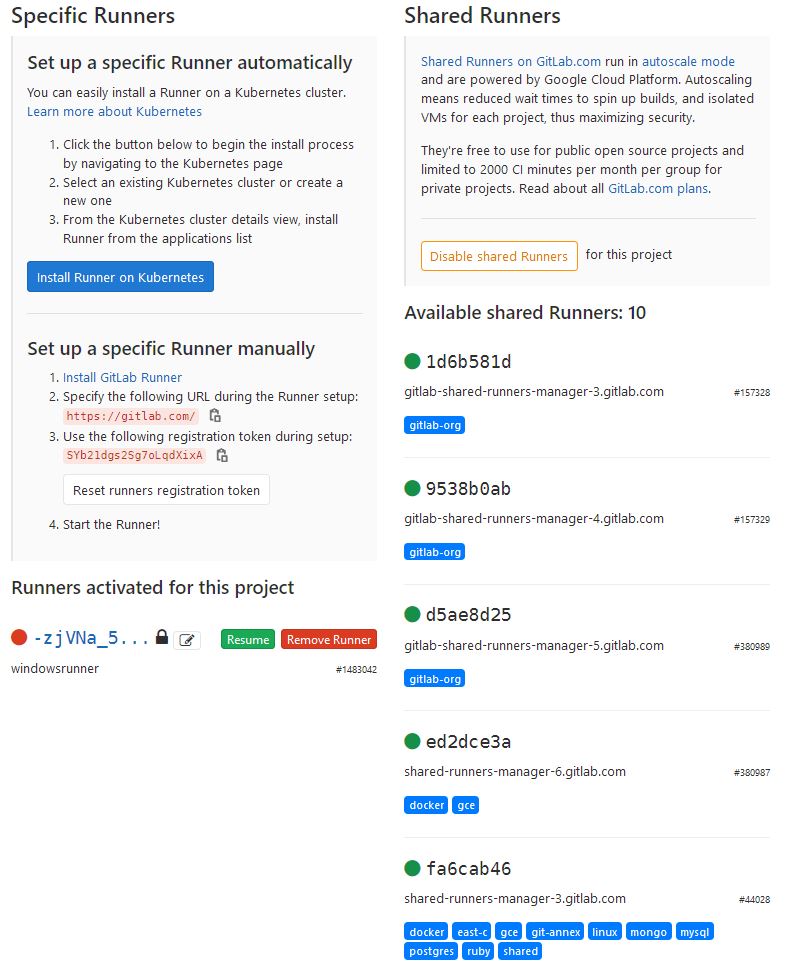

In the case of GitLab.com, there are shared Runners with different capabilities. These can be viewed in the CI/CD section of the project settings. In the Runners settings, the right side shows the GitLab shared Runners; on the left side, own (specific) Runners can be configured.

Every Runner has a set of associated tags, which can be specified in a CI config to ensure that the pipeline is executed by a Runner that actually has all the needed features. (Not to be confused with Git tags, which can also be listed in the config to filter the commit events that trigger a pipeline!)

Example:

    build:
      tags:
        - docker

In practice, if no tags are specified, pipelines seem to run on a Docker-capable Runner most of the time.
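To illustrate the difference between the two kinds of tags, here is a hypothetical job (the job name release is a placeholder) that uses Runner tags to pick a Docker-capable Runner and the only keyword to restrict the job to pipelines for pushed Git tags:

```yaml
release:
  # Runner tags: only Runners that carry the "docker" tag may pick up this job
  tags:
    - docker
  # Git tags: only create this job in pipelines triggered by pushing a Git tag
  only:
    - tags
  script:
    # CI_COMMIT_TAG is a predefined GitLab variable holding the tag name
    - echo "Building release $CI_COMMIT_TAG"
```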

CI configurations usually specify a Docker image, which the Runner pulls to provide a suitable build environment.

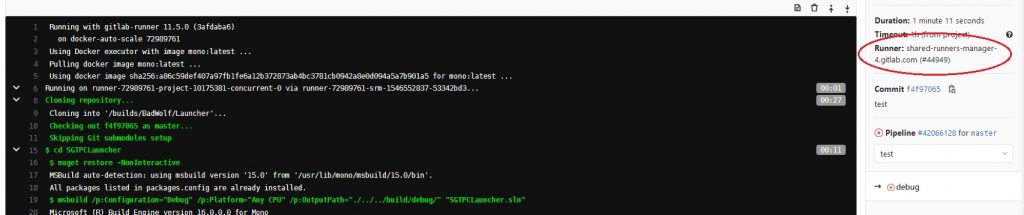

The Runner assigned to a given job can be seen in the job’s log view.

GitLab’s free plan includes 2000 CI pipeline minutes per group per month [2].

GitHub Actions

GitHub introduced Actions in 2018. Compared to GitLab’s CI/CD pipeline system, GitHub Actions support the automation of a wider range of workflows. Explicit support for CI was a much-requested feature that was added to the existing system in mid-2019 [3].

While GitLab’s CI execution is directly tied to commit events, GitHub’s Actions can be triggered with any kind of event, such as issue-related events, webhooks, or simply on a cronjob-like schedule. A full list of events can be found here: [4].
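As a sketch of such triggers, the on section of a workflow file (placed in .github/workflows, for example under the hypothetical name ci.yml) could combine a push trigger, an issue event and a schedule. This is only an excerpt; a complete workflow also needs a jobs section:

```yaml
# Excerpt of a workflow file such as .github/workflows/ci.yml (file name is hypothetical)
on:
  push:
    branches: [ master ]
  issues:
    types: [ opened ]
  schedule:
    # Every day at 03:00 UTC (GitHub evaluates cron schedules in UTC)
    - cron: '0 3 * * *'
```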

On GitHub, a runner environment is not selected by tags, but instead directly given through the config file. GitHub provides hosted runners for all major operating systems: (Ubuntu) Linux, Windows, and macOS. Using Docker containers is also possible.
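A minimal sketch of the jobs section of such a workflow, where the hosted runner is named directly through runs-on (here iterating over all three operating systems with a matrix; the job name build is a placeholder):

```yaml
jobs:
  build:
    strategy:
      matrix:
        os: [ubuntu-latest, windows-latest, macos-latest]
    # The hosted runner is selected directly by its label, not via tags
    runs-on: ${{ matrix.os }}
    steps:
      - uses: actions/checkout@v2
      - run: echo "Running on ${{ runner.os }}"
```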

GitHub’s free plan includes 2000 Action minutes per month [5].

Bitbucket Pipelines

Bitbucket’s Runners can be selected neither explicitly nor implicitly; only a Docker image can be chosen as the environment. Together with the fact that Bitbucket doesn’t ("yet") support building on Windows or macOS [6], this suggests that there is only one kind of Runner configuration.
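For comparison, a minimal bitbucket-pipelines.yml sketch: the top-level image is the only way to influence the build environment. The .NET Core SDK image and the dotnet build command are only examples here, not a configuration used in this project:

```yaml
# bitbucket-pipelines.yml in the repository root (sketch; image is only an example)
image: mcr.microsoft.com/dotnet/core/sdk:3.1

pipelines:
  default:
    - step:
        name: Build
        script:
          - dotnet build
```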

Compared to the other Git providers, Bitbucket’s free plan includes only 50 CI minutes per month [7].

The .NET world

The term .NET has had several meanings over time. In the early 2000s, it was used as a marketing brand for all new Microsoft releases (Visual Studio .NET, Windows .NET Server, etc.). Later, it came to mean the software platform and runtime environment as which it is known today.

Similar to Java, .NET applications run in a virtual execution environment (the Common Language Runtime). .NET also has a dependency manager called NuGet, which is to some degree comparable to Maven in Java.

Currently, there are three main implementations of .NET, listed below. One term worth mentioning in this context is .NET Standard, a specification of the APIs that all .NET implementations have in common, basically their common denominator. An overview can be found here: [8]. Microsoft plans to unite the different variants under .NET 5 towards the end of 2020 [9].

- .NET Framework: Microsoft’s proprietary, Windows-only implementation of .NET.

- Mono: A cross-platform, open source implementation of .NET. Today, it is mainly focused on mobile devices as part of the Xamarin/Mono runtime. Mono does not support WPF, and there are no plans to add it in the future [10].

- .NET Core: A newer cross-platform, open source implementation of .NET, targeting Windows, Linux and macOS. Unlike .NET Framework, multiple versions of .NET Core can be installed side by side. Support for the Windows desktop frameworks WPF and WinForms was added with version 3.0 in 2019.

Attempt 1: Building on .NET Framework

Since my application is built for .NET Framework, naturally, the first approach was to create a corresponding build environment, so that there wouldn’t be the need to make changes to the application.

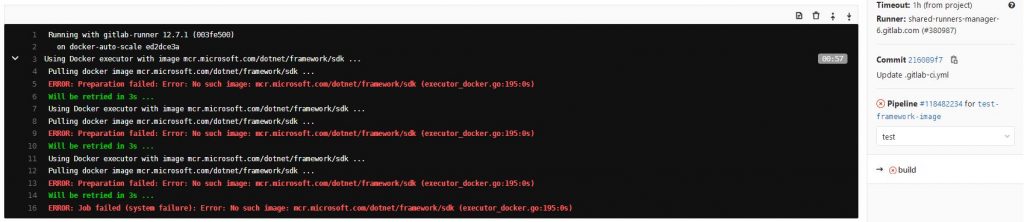

Microsoft does provide a .NET Framework SDK Docker image [11]; however, it is a Windows container image and therefore only works with a Windows-based Docker host. Attempting to run it on a Linux machine results in a hard error:

    docker: no matching manifest for linux/amd64 in the manifest list entries.

When it is used in the GitLab CI configuration, the job fails because the image simply cannot be found.
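For illustration, a job referencing this image could look like the following hypothetical sketch. The exact configuration of this attempt isn't shown here; the 4.8 tag and the msbuild call are assumptions:

```yaml
build:
  # Windows container image: GitLab's Linux-based shared Runners cannot pull or run it
  image: mcr.microsoft.com/dotnet/framework/sdk:4.8
  script:
    - msbuild SGTPCLauncher/SGTPCLauncher.sln
```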

Attempt 2: Porting and Building on .NET Core

With the .NET Framework Docker image not working on GitLab Runners, .NET Core appears to be a viable alternative: since .NET Core focuses on cross-platform applications, it seems reasonable to expect that its SDK Docker image [12] would be able to run in the GitLab Runner environment.

However, .NET Framework applications need to be ported to .NET Core first. Luckily, this process isn’t too complicated for small-scale apps such as this one. There are plenty of guides and tutorials explaining the porting process; I followed this one in particular, which explains everything pretty well: [13].

Basically, it boils down to the following steps:

- Make sure that the project uses the package reference format.

  The .csproj file is the XML-based project file of .NET. It includes the project’s settings and properties, such as the target runtime, build configurations, source files and resources to include or exclude, etc.

  In .NET Framework, NuGet package references can either be stored in a separate packages.config file or in the newer package reference format inside the .csproj file. In .NET Core, the package reference format is mandatory, so NuGet references must be stored in the .csproj file.

- Verify the compatibility of the NuGet dependencies and update them if necessary.

  Of course, a package that is installed via NuGet must be compatible with the runtime that a project targets. Many packages were developed before .NET Core was around and therefore might only support .NET Core in newer versions, if at all. Depending on the version of .NET Standard that a package implements, it may still be compatible with .NET Core, even if it’s not explicitly labeled as such.

- Adapt the code to the .NET Core APIs, which may differ from those of .NET Framework.

  Some API calls don’t cause any issues at build time but behave differently at runtime than they would on .NET Framework. It’s therefore important to test whether all code paths of the application still work as intended on .NET Core.

After porting the application to .NET Core 3.1 and successfully building it on a local Windows machine, I expected that it should, in theory, also build on a Linux-based system like the .NET Core SDK Docker container on GitLab.com.
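A job for this attempt could look roughly like the following sketch, using the Linux-based .NET Core 3.1 SDK image on a shared Runner (the solution path follows the one used later in this post; the exact commands of the original attempt are an assumption):

```yaml
build:
  # Linux-based .NET Core SDK image, runs on GitLab's regular shared Runners
  image: mcr.microsoft.com/dotnet/core/sdk:3.1
  script:
    - dotnet restore SGTPCLauncher/SGTPCLauncher.sln
    - dotnet build --configuration Release SGTPCLauncher/SGTPCLauncher.sln
```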

However, as it turns out, there is still a difference between a .NET Core app in general and a desktop application specifically. Attempting to build the application on the GitLab Runner, or on a local Linux system, results in "error NETSDK1100: Windows is required to build Windows desktop applications.", which sounds like a pretty solid dead end. Apparently, even though WPF (and probably WinForms as well) is supported in .NET Core, it can still only be built in a Windows environment.

<hr>

Recap so far:

- The .NET Framework Docker image doesn’t work on the (non-Windows) GitLab Runners.

- Even after porting the application to .NET Core, the fact that it uses WPF still locks it to a Windows build environment.

<hr>

Windows build server

With the other options failing, nothing remained but to give up and drop the secondary objective of doing everything purely in GitLab.

Aside from GitLab’s public shared Runners, it is also possible to host your own Runners and link them to a GitLab project. To do that, I created a Windows VM with a cloud provider and equipped it with the .NET Core SDK as well as the GitLab Runner software.

Since the original application was written for .NET Framework, remaining on Core wasn’t really necessary. I still wanted to try and follow this path as far as possible, since the Core approach of cross-platform development clearly seems to be the future of .NET. Should that approach fail, switching back to .NET Framework would still be an acceptable solution.

Installing the Runner and registering it with GitLab is pretty straightforward [14]. After installing the service, it basically comes down to:

- Entering the GitLab server address (in my case gitlab.com)

- Entering the registration token that ties it to a specific project

- Entering description, name and tags

- Choosing an executor:

A pipeline doesn’t necessarily need to run in a Docker container; several alternative executors are available as well, including Kubernetes, VirtualBox, or simply native shell execution. For simplicity’s sake, I opted for the latter here.

After the setup, it was simply a matter of disabling the shared Runners in the project to force the CI pipeline to use the custom one. (I could also have assigned tags to my Runner during setup to be able to select it specifically. But since I only had one dedicated Runner and couldn’t use the shared Runners anyway, not using tags seemed like a cleaner solution, as it keeps the CI config file a bit shorter.)
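For the tag-based alternative, a sketch could look like this; my-windows-vm is a hypothetical tag that would have to be entered when registering the self-hosted Runner, and the build command is only an example:

```yaml
build:
  # "my-windows-vm" is a hypothetical tag assigned during Runner registration
  tags:
    - my-windows-vm
  script:
    - dotnet build SGTPCLauncher/SGTPCLauncher.sln
```

Note that, by default, a Runner registered with tags only picks up jobs carrying a matching tag, unless the "Run untagged jobs" option is enabled in its settings.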

In theory, providing the built executable as a download in GitLab is simply a matter of adding its path as an artifact to the CI config:

    artifacts:
      paths:
        - "SGTPCLauncher/SGTPCLauncher/bin/Release/SGTPCLauncher.exe"

Publishing on .NET Core

However, building a project into a single executable doesn’t seem to be trivial in .NET Core, as opposed to .NET Framework, where the .exe file can simply be taken from the build output directory and distributed to any compatible system. There are three "build modes" (deployment types) in .NET Core [15]:

- Framework-dependent deployment (FDD)

  Creates a .dll file, which needs to be run with the dotnet command: dotnet <PROJECT-NAME>.dll. This deployment form depends on the target runtime being installed on the system.

- Framework-dependent executable (FDE)

  Similar to FDD, but also creates an executable next to the .dll, which can be used instead of running the .dll with the dotnet command. This is the default mode in .NET Core 3.

- Self-contained deployment (SCD)

  This option bundles all .NET Core files needed to run the app with the executable, so the .NET Core runtime doesn’t need to be installed on the target system. It does not include the "native dependencies" of .NET, though; those still need to be present on the system.
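The deployment type is chosen through options of dotnet publish. As a sketch (not the configuration used in this project), a job on the self-hosted Windows Runner could publish a self-contained build like this. The tag my-windows-vm and the runtime identifier win-x64 are assumptions. Packing the output into one file is a separate switch, PublishSingleFile, available since .NET Core 3.0; without it, a self-contained publish produces a folder containing the executable and the runtime files.

```yaml
publish:
  tags:
    - my-windows-vm   # hypothetical tag of the self-hosted Windows Runner
  script:
    # Self-contained deployment for 64-bit Windows, additionally packed into a single file
    - dotnet publish SGTPCLauncher/SGTPCLauncher/SGTPCLauncher.csproj -c Release -r win-x64 --self-contained true -p:PublishSingleFile=true -o publish
  artifacts:
    paths:
      - publish/
```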

Even with the self-contained deployment, running the executable still results in an assembly error:

    $ ./SGTPCLauncher.exe
    Error:
      An assembly specified in the application dependencies manifest (SGTPCLauncher.deps.json) was not found:
        package: 'LibGit2Sharp.NativeBinaries', version: '2.0.306'
        path: 'runtimes/win-x86/native/git2-106a5f2.pdb'

(This error message was only revealed when I ran the .exe on the command line. Simply double-clicking the file didn’t do anything at all.)

This may be caused by an error made while porting to .NET Core, or by the fact that LibGit2Sharp, one of my NuGet packages, isn’t explicitly built for .NET Core; it only implements .NET Standard. LibGit2Sharp.NativeBinaries is a secondary dependency that comes with the LibGit2Sharp NuGet package.

Even explicitly adding LibGit2Sharp.NativeBinaries to the dependency list in the .csproj file, promoting it to a first-level dependency, didn’t make a difference.

Conclusion for .NET Core

At this point, I decided to switch back to .NET Framework, since I could definitely get a functioning build output there. Especially since it’s now clear that there is no way around using a Windows environment to build, staying on .NET Core doesn’t bring any actual benefits in my situation.

In conclusion, I can say that .NET Core sounds promising, as long as there are no Windows-locked dependencies. The porting process seems easy at first glance, but the complications shouldn’t be underestimated.

I assume that with an app that was built on .NET Core from the beginning, rather than being ported over from .NET Framework, there would have been fewer problems.

It definitely came as a surprise to me that, despite being supported by .NET Core, WPF is still hard-locked to a Windows build environment. While I wasn’t seriously expecting the application to run on a Linux system "just like that", I had at least expected to be able to build it in a non-Windows environment.

GitLab introduces Windows Shared Runners

At the end of January 2020, GitLab introduced Windows-based shared Runners (as a beta) with the release of GitLab 12.7 [16].

This makes using a Windows Runner as easy as adding the windows tag to the CI configuration. Unlike the other shared Runners, the Windows Runners use the shell (PowerShell) executor and therefore run the pipelines natively, without the option to specify a Docker image. This means that, apart from some pre-installed tools [17], all other required software needs to be installed in the CI script using the Chocolatey package manager for Windows [18].
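A sketch of a job that targets the Windows shared Runners and installs an additional tool via Chocolatey before the actual build. The tags are the ones used in the final configuration of this post; the package name dotnetcore-sdk is an assumption and should be checked on chocolatey.org:

```yaml
build:
  # Tags that select GitLab's Windows shared Runners (beta as of early 2020)
  tags:
    - shared-windows
    - windows
    - windows-1809
  before_script:
    # Install extra software with Chocolatey; -y skips the confirmation prompt
    # (package name is only an example)
    - choco install dotnetcore-sdk -y
  script:
    - dotnet --info
```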

While Windows Runners are currently priced the same as the others (2000 free minutes per group per month on the free plan), this will likely change once the beta phase has concluded [19].

Finding MSBuild

To build a .NET Framework project, there is no included command line interface like the dotnet command for .NET Core. Instead, there is msbuild, which is included in the Visual Studio Build Tools, or can be installed separately. On Windows, these tools aren’t added to the PATH variable on installation, meaning that on the command line, they can only be accessed using the full path to the executable.

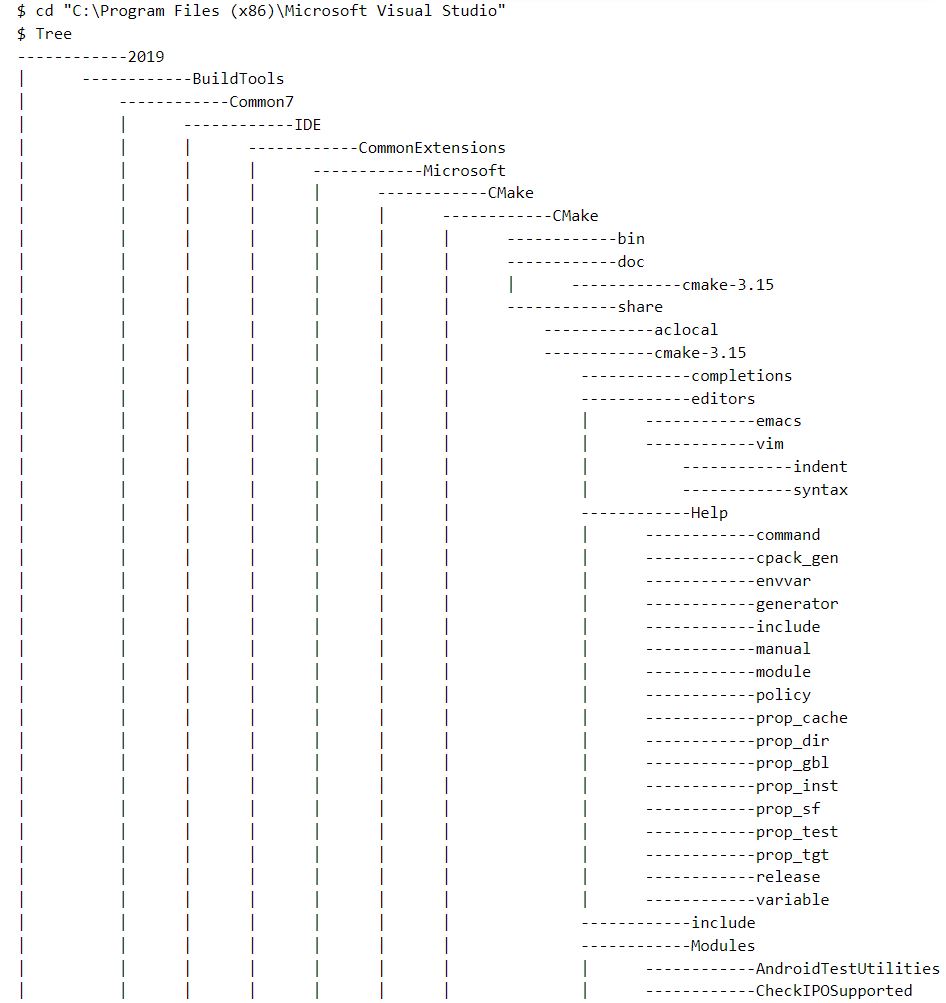

The tricky part was finding out where exactly msbuild.exe was located, since it wasn’t in the same location as on my own system. While I could list all occurrences of the file on my local Windows machine with Get-ChildItem -Path *\msbuild.exe -Recurse in PowerShell (basically the Windows equivalent of find), running this command on the Runner didn’t produce any output for some reason.

In the end, I printed the whole directory structure using the tree command and searched the output for the msbuild file.

That gave me the following path: C:\Program Files (x86)\Microsoft Visual Studio\2019\BuildTools\MSBuild\Current\Bin\msbuild.exe, which I added as a variable to the CI file:

    variables:
      MSBUILD: 'C:\Program Files (x86)\Microsoft Visual Studio\2019\BuildTools\MSBuild\Current\Bin\msbuild.exe'
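As an alternative to hard-coding the path, Microsoft's vswhere tool can be asked where MSBuild is installed. The following is a sketch under the assumption that vswhere.exe sits at its default location next to the Visual Studio Installer on the Runner image; -products * is needed so that Build Tools installations are included:

```yaml
build:
  tags:
    - shared-windows
    - windows
    - windows-1809
  script:
    # Ask vswhere for the newest MSBuild.exe instead of hard-coding its path
    - '$msbuild = & "${env:ProgramFiles(x86)}\Microsoft Visual Studio\Installer\vswhere.exe" -latest -products * -requires Microsoft.Component.MSBuild -find "MSBuild\**\Bin\MSBuild.exe" | Select-Object -First 1'
    - 'Write-Output "Using MSBuild at $msbuild"'
    # All script lines run in the same PowerShell session, so $msbuild is still set here
    - '& $msbuild SGTPCLauncher/SGTPCLauncher/SGTPCLauncher.csproj'
```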

CI Configuration

With that, it was finally possible to run the build command against my project file:

    script:
      - '& "$env:MSBUILD" SGTPCLauncher/SGTPCLauncher/SGTPCLauncher.csproj'

The & character is PowerShell’s call operator. It is needed here because the path to the executable is given as a quoted string (here, via a variable): without the operator, PowerShell would treat the string as a plain value instead of a command to run. "$env:MSBUILD" resolves the variable defined earlier, which GitLab exposes to the job as an environment variable.
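If the project has to be built in several configurations, the build line can simply be repeated for each one. As an alternative sketch, a PowerShell loop keeps the script shorter. It assumes the MSBUILD variable from above and the configuration names and .exe suffixes used in this project's final configuration; only the script section is shown:

```yaml
script:
  - |
    # Map each build configuration to the suffix of its exported .exe
    $builds = [ordered]@{ 'Release' = 'Dev'; 'Release_User' = 'User'; 'Release_ClosedBeta' = 'CBeta' }
    foreach ($config in $builds.Keys) {
      & "$env:MSBUILD" /p:Platform="AnyCPU" /p:Configuration="$config" /p:OutputPath="./bin/$config/" SGTPCLauncher/SGTPCLauncher/SGTPCLauncher.csproj
      # PowerShell doesn't abort on a failing native command by itself, so check explicitly
      if ($LASTEXITCODE -ne 0) { exit $LASTEXITCODE }
      Copy-Item "SGTPCLauncher/SGTPCLauncher/bin/$config/SGTPCLauncher.exe" "./SGTPCLauncher_$($builds[$config]).exe"
    }
```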

Success at last!

This is the full, final .gitlab-ci.yml file. It includes a few additional steps to build the application in all required configurations and to prepare the build artifacts for packaging, so that they end up in the root of the resulting .zip archive instead of the archive mirroring the whole directory structure.

    variables:
      MSBUILD: 'C:\Program Files (x86)\Microsoft Visual Studio\2019\BuildTools\MSBuild\Current\Bin\msbuild.exe'

    build:
      # These tags ensure that we get a Windows Runner
      tags:
        - shared-windows
        - windows
        - windows-1809

      # only run this job for updates of the specified branch
      only:
        - windows-runner-test

      # make sure that the nuget dependencies are present and up to date
      # clean the build output directory from potential earlier artifacts
      before_script:
        - nuget restore SGTPCLauncher/SGTPCLauncher.sln
        - rm SGTPCLauncher/SGTPCLauncher/bin/ -recurse

      # Build the project with different configurations, then
      # copy the build artifacts into the root of the working directory, so there won't be any directory structure in the packaged artifact archive
      script:
        - '& "$env:MSBUILD" /p:Platform="AnyCPU" /p:Configuration="Release" /p:OutputPath="./bin/Release/" SGTPCLauncher/SGTPCLauncher/SGTPCLauncher.csproj'
        - '& "$env:MSBUILD" /p:Platform="AnyCPU" /p:Configuration="Release_User" /p:OutputPath="./bin/Release_User/" SGTPCLauncher/SGTPCLauncher/SGTPCLauncher.csproj'
        - '& "$env:MSBUILD" /p:Platform="AnyCPU" /p:Configuration="Release_ClosedBeta" /p:OutputPath="./bin/Release_ClosedBeta/" SGTPCLauncher/SGTPCLauncher/SGTPCLauncher.csproj'
        - cp SGTPCLauncher/SGTPCLauncher/bin/Release/SGTPCLauncher.exe ./SGTPCLauncher_Dev.exe
        - cp SGTPCLauncher/SGTPCLauncher/bin/Release_User/SGTPCLauncher.exe ./SGTPCLauncher_User.exe
        - cp SGTPCLauncher/SGTPCLauncher/bin/Release_ClosedBeta/SGTPCLauncher.exe ./SGTPCLauncher_CBeta.exe

      # package the following path(s) as downloadable artifacts
      artifacts:
        paths:
          - "./SGTPCLauncher_*.exe"

References

[1]: https://martinfowler.com/bliki/ContinuousIntegrationCertification.html

[2]: https://about.gitlab.com/pricing/

[3]: https://www.youtube.com/watch?v=E1OunoCyuhY

[4]: https://help.github.com/en/actions/reference/events-that-trigger-workflows

[5]: https://github.com/pricing

[6]: https://confluence.atlassian.com/bitbucket/limitations-of-bitbucket-pipelines-827106051.html

[7]: https://bitbucket.org/product/pricing

[8]: https://docs.microsoft.com/dotnet/standard/net-standard

[9]: https://devblogs.microsoft.com/dotnet/introducing-net-5/

[10]: https://www.mono-project.com/docs/gui/wpf/

[11]: https://hub.docker.com/_/microsoft-dotnet-framework-sdk

[12]: https://hub.docker.com/_/microsoft-dotnet-core-sdk/

[13]: https://www.youtube.com/playlist?list=PLReL099Y5nRdG-LQ6OZSPECF-eXjgFNrW

[14]: https://docs.gitlab.com/runner/register/index.html

[15]: https://docs.microsoft.com/en-us/dotnet/core/deploying/deploy-with-cli

[16]: https://about.gitlab.com/blog/2020/01/21/windows-shared-runner-beta/

[17]: https://gitlab.com/gitlab-org/ci-cd/shared-runners/images/gcp/windows-containers/tree/master/cookbooks/preinstalled-software

[18]: https://chocolatey.org/

[19]: https://gitlab.com/gitlab-org/gitlab/issues/30834
