Riot Games is the developer of a number of big online and offline games, most notably ‘League of Legends’. League of Legends is a real-time multiplayer online battle arena game in which two teams of five players each fight one another. As the game is fast-paced, split-second decisions can be the deciding factor between winning and losing. Therefore, low latency is one of the core concerns of Riot Games. The game currently has around 150 million active players worldwide[8]. Back in 2014, Riot Games took a closer look at what they needed to ensure that as many of their players as possible get the best possible experience. They identified a number of problems and came to an interesting conclusion: they needed to create their very own internet.

The Problems

Real-time multiplayer games, like Riot Games' League of Legends, have very demanding requirements regarding the latency of a connection. If you open YouTube in your browser and the site takes one or two seconds to load, that is totally fine. In a real-time game like League, however, where split-second decisions and reactions can decide the outcome of a match, a delay of one or two seconds before your game receives the newest game state has a huge impact. Therefore, real-time games have a great interest in keeping latency as low as possible.

Buffering

Real-time games cannot lessen the impact of latency spikes using methods like buffering. To revisit the previous example: when watching a video on YouTube, it is known exactly which data is going to be requested next, so the next few seconds of the video can be buffered in advance. If the latency spikes, the video can still run for a few seconds while it waits for new data to arrive. However, real-time games like League of Legends are not predictable, as they depend completely on user input. Therefore, a slower response time will always be noticeable.
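The difference can be illustrated with a small simulation. This is only a sketch under simplifying assumptions that are not from Riot Games: time is divided into ticks, one unit of content is consumed per tick, and `arrivals` is a made-up list of how many units arrive in each tick. The function name `count_stalls` is hypothetical as well.

```python
def count_stalls(arrivals, prebuffered):
    """Count ticks in which nothing is available to play.

    arrivals: units of content arriving in each tick (hypothetical data).
    prebuffered: units already downloaded before playback starts.
    """
    buffered = prebuffered
    stalls = 0
    for arrived in arrivals:
        buffered += arrived
        if buffered > 0:
            buffered -= 1   # one unit is played in this tick
        else:
            stalls += 1     # nothing to play: the user notices the spike
    return stalls


# A latency spike: nothing arrives for three ticks, then a burst catches up.
spike = [1, 1, 0, 0, 0, 3, 1, 1]

video_stalls = count_stalls(spike, prebuffered=3)  # video fetched ahead of time
game_stalls = count_stalls(spike, prebuffered=0)   # game state cannot be prefetched

print(video_stalls, game_stalls)
```

With three units prebuffered, the video plays through the spike without stalling, while the game, which cannot fetch future state ahead of time, stalls in every tick of the spike.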

ISPs

The ‘internet’ is not a single, unified entity. It is made up of multiple backbone companies and Internet Service Providers (ISPs), and these companies have vastly different priorities than companies like Riot Games. Riot Games wants its data to take the path with the lowest latency. Backbone companies and ISPs, on the other hand, will use the most cost-efficient path, even if that path takes longer.

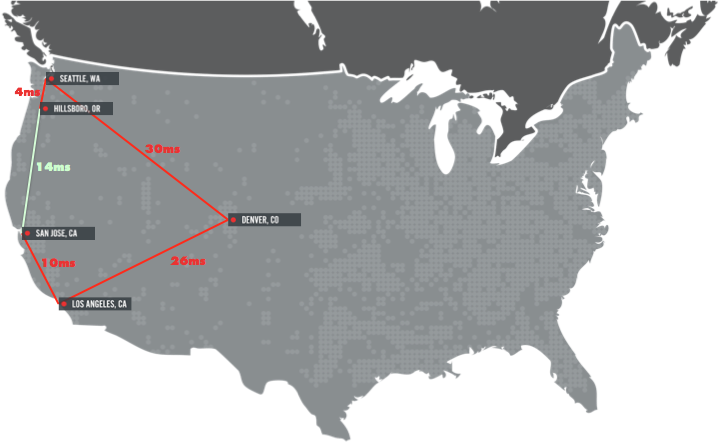

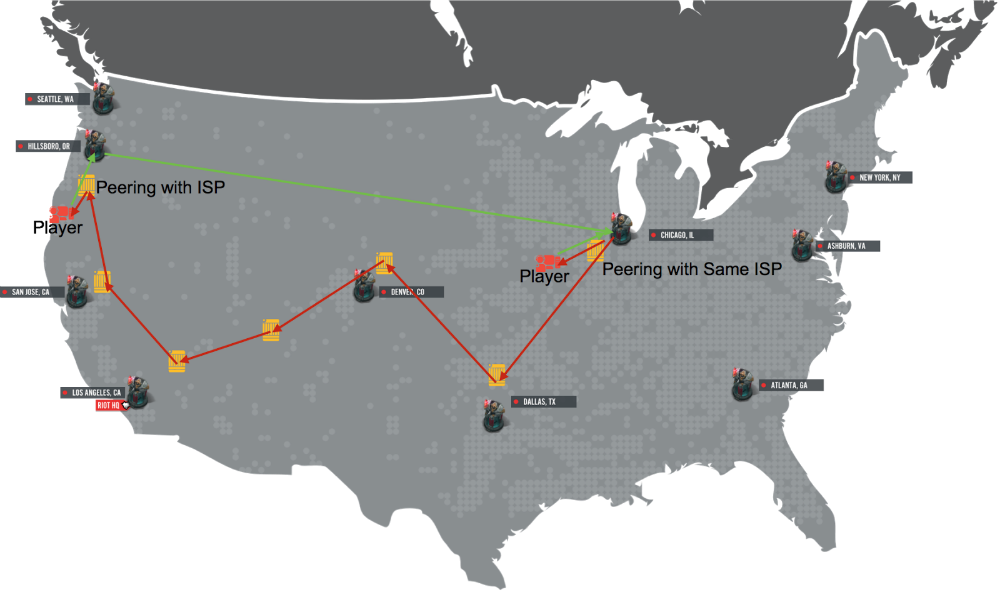

Figure 1 shows a real traffic flow of a League of Legends player. The player, located in the San Jose area, tries to connect to the Hillsboro area. The optimal connection is marked in light green and would lead directly from San Jose to Hillsboro. In reality, the traffic flows to Los Angeles, then via Denver to Seattle, and only then reaches Hillsboro; this path is marked in red. The optimal connection (green) would take 14 ms, while the actual connection (red) takes 70 ms, five times as long.
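The conflict between the two priorities can be sketched as a shortest-path search over the same network with two different edge weights. In the following sketch, only the two totals (14 ms and 70 ms) come from the example above; the per-link latencies and all cost values are invented for illustration, and `best_path` is a plain Dijkstra search, not the algorithm any ISP actually runs.

```python
import heapq

# Each link: (neighbor, latency_ms, cost). Per-link latencies and all costs
# are hypothetical; they are chosen so the detour adds up to 70 ms.
LINKS = {
    "San Jose": [("Hillsboro", 14, 10), ("Los Angeles", 10, 1)],
    "Los Angeles": [("Denver", 22, 1)],
    "Denver": [("Seattle", 30, 1)],
    "Seattle": [("Hillsboro", 8, 1)],
    "Hillsboro": [],
}


def best_path(links, start, goal, metric):
    """Dijkstra search minimizing either 'latency' or 'cost'."""
    queue = [(0, start, [start])]
    visited = set()
    while queue:
        total, node, path = heapq.heappop(queue)
        if node == goal:
            return total, path
        if node in visited:
            continue
        visited.add(node)
        for neighbor, latency_ms, cost in links[node]:
            weight = latency_ms if metric == "latency" else cost
            heapq.heappush(queue, (total + weight, neighbor, path + [neighbor]))
    return None


def path_latency(links, path):
    """Sum the latency of every link along a path."""
    total = 0
    for here, there in zip(path, path[1:]):
        total += next(lat for nxt, lat, _ in links[here] if nxt == there)
    return total


fastest_ms, fastest = best_path(LINKS, "San Jose", "Hillsboro", "latency")
_, cheapest = best_path(LINKS, "San Jose", "Hillsboro", "cost")

print(fastest, fastest_ms)                         # direct link
print(cheapest, path_latency(LINKS, cheapest))     # cheap detour, higher latency
```

Under these assumed weights, the latency-optimal search picks the direct link, while the cost-optimal search picks the detour via Los Angeles, Denver and Seattle.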

Routers

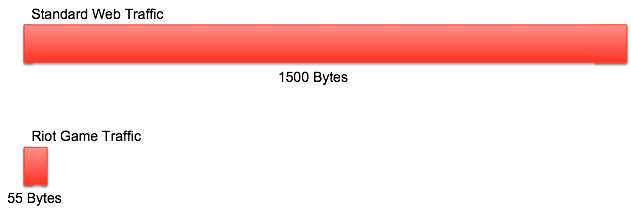

Another problem lies in the size of Riot Games' traffic. Most internet traffic is transmitted in packets of 1500 bytes[2]. In comparison, Riot Games' packets are usually quite small, around 55 bytes, as they might only contain simple data like the location of a player’s click.

The problem with this size difference is that routers have the same processing overhead for packets of any size, meaning that for the router, every packet costs the same amount of work. To send the same amount of data as one standard packet, Riot Games needs to send around 27 packets. From the router's perspective, each of these packets costs the same amount of work as a normal packet, meaning that Riot Games' traffic is around 27 times as expensive as standard traffic. Furthermore, routers have an input buffer that could theoretically hold the increased number of packets. However, these buffers are limited not only by size in bytes but also by a fixed number of packets, meaning that Riot Games also fills them 27 times as fast[3]. If the buffer is full and another packet arrives, the router drops it, resulting in packet loss in the game. As a result, a player might, for example, take damage seemingly out of nowhere.
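The factor of 27 follows directly from the two packet sizes. The short calculation below is a sketch that uses only the 1500-byte and 55-byte figures from the text; the buffer capacity of 1000 packet slots is an arbitrary, hypothetical number chosen to make the effect visible.

```python
STANDARD_PACKET_BYTES = 1500  # typical packet size, from the text
GAME_PACKET_BYTES = 55        # typical game packet size, from the text

# How many small packets carry the same number of bytes as one large packet?
packet_ratio = STANDARD_PACKET_BYTES / GAME_PACKET_BYTES
print(f"{packet_ratio:.1f} game packets per standard packet")


def bytes_held(packet_slots, packet_bytes):
    """Data a buffer holds when it is limited by packet count, not bytes."""
    return packet_slots * packet_bytes


BUFFER_SLOTS = 1000  # hypothetical buffer capacity in packets

print(bytes_held(BUFFER_SLOTS, STANDARD_PACKET_BYTES), "bytes of standard traffic")
print(bytes_held(BUFFER_SLOTS, GAME_PACKET_BYTES), "bytes of game traffic")
```

Because the assumed buffer counts packets rather than bytes, it is full after holding only about 1/27 of the data it could hold with full-size packets.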

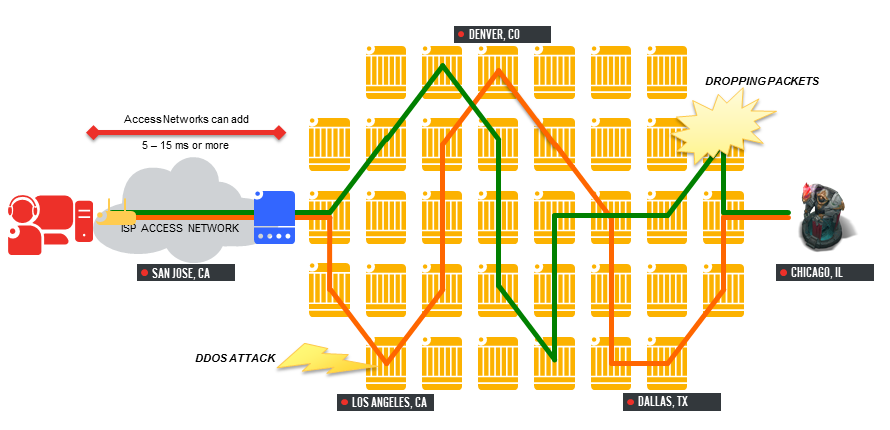

Figure 3 summarizes the problems that can occur while connecting a player to the game server: the player's traffic first has to pass through the ISP access networks and is then routed across several routers. These routers may already be busy, which increases the chance of overloading them and thus of packet loss.

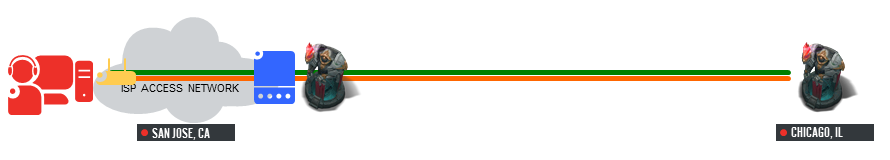

Riot Games saw all these problems and intended to improve the situation. Their solution: Riot Direct, which basically turns the situation shown in Figure 3 into this:

Riot Direct

After identifying the problems described above, Riot Games came to the conclusion that the best solution was to create their own internet. Their requirements are simply too different from those of the ISPs. The ISPs want a network with as many routers as possible, to allow for as much traffic as possible, while routing along the most cost-efficient path. In contrast, Riot Games wants to route along the most time-efficient path and does not need excessive numbers of routers, which would only increase latency.

So Riot Games got to work and started identifying the fastest fiber routes and the co-locations with the most ISPs. They set up routers in these co-locations and peered with as many of the ISPs as possible. Riot Games needed to be connected with as many ISPs in as many places as possible, because users' data still has to pass through their ISP's network first to reach Riot Games' network. Riot Games had to deal not only with hardware but also with software issues, mostly with the standard internet routing protocol, the ‘Border Gateway Protocol (BGP)’[5]. BGP is built for the common use case of the ISPs and therefore causes several problems with the special requirements of Riot Games' network.

One example of the problems with BGP concerns traffic leaving Riot Games' network.

Figure 5 shows the incoming (green) and outgoing (red) traffic of Riot Games' servers in Chicago. In this case, Riot Games peered with the same ISP in both the Hillsboro and the Chicago area. This ISP's players in the Chicago area would enter Riot Games' network through the Chicago peering point, and when Riot Games sent traffic back, it would return to the players along the exact same route. Players of the same ISP in the Hillsboro area would enter Riot Games' network through the Hillsboro peering point. However, when Riot Games tried to send traffic back to those players, BGP would compute the fastest route from Riot Games' network in Chicago to the ISP's network. As Riot Games peered with the ISP in Chicago, the return traffic would leave Riot Games' network right there and be routed entirely through the ISP's network, effectively bypassing Riot Games' network. To fix this, Riot Games worked together with the ISPs and had them mark their traffic using ‘BGP Communities’[6]. This allowed Riot Games to create special rules for the return traffic.
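The idea behind such return rules can be sketched as a lookup from a community tag to an exit point. The following is a conceptual model only, not router configuration and not Riot Games' actual policy: the community values, the prefixes (taken from the documentation address ranges) and the function names are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical community tags the ISP attaches to announce where its
# players are located. Real values would be agreed on between the peers.
COMMUNITY_TO_EGRESS = {
    "64512:100": "Chicago",
    "64512:200": "Hillsboro",
}


@dataclass(frozen=True)
class Route:
    prefix: str      # address block of the ISP's players
    community: str   # tag set by the ISP, empty if untagged


def default_egress(route, local_site):
    """Without special rules: hand traffic to the ISP at the nearest peering."""
    return local_site


def community_egress(route, local_site):
    """With community rules: leave where the players entered the network."""
    return COMMUNITY_TO_EGRESS.get(route.community, local_site)


hillsboro_players = Route("198.51.100.0/24", "64512:200")
chicago_players = Route("192.0.2.0/24", "64512:100")

# Game servers are in Chicago, so "Chicago" is the local site.
print(default_egress(hillsboro_players, "Chicago"))    # exits early in Chicago
print(community_egress(hillsboro_players, "Chicago"))  # stays on-net to Hillsboro
print(community_egress(chicago_players, "Chicago"))    # unchanged
```

In this model, untagged routes fall back to the default behavior, so only traffic the ISP has explicitly marked is steered to a different exit.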

The Results

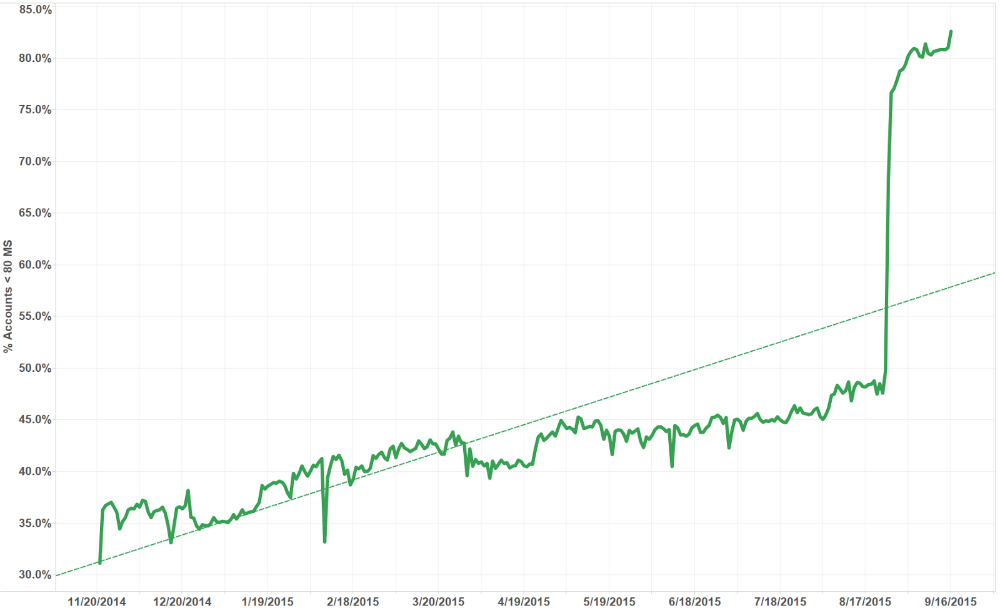

Riot Games released some statistics that they used to measure the improvement their changes brought. As the metric, they used the percentage of players with a latency under 80 ms.

As shown in Figure 6, when Riot Games started establishing Riot Direct, the percentage of players with a latency under 80 ms was somewhere between 30% and 35%. By the end of their work, that percentage had climbed to around 50% of players. Furthermore, their servers used to be located on the east coast, but with the introduction of Riot Direct, they moved their servers to Chicago, a more central location.

The impact of the server move to Chicago is shown in Figure 7. The percentage of players with a ping below 80 ms increased further, from around 50% to 80%. All in all, the share of players below 80 ms rose from roughly 30% to 80%, meaning that Riot Games managed to improve the playing experience of around 50% of the players in the US, which is a huge success.

Conclusion

The modern internet is always evolving. In the past, every aspect was left to ISPs and backbone companies, which delivered a one-size-fits-all approach. For the longest time, this was completely okay. However, the internet has grown exponentially, and the requirements that individual companies may have have also greatly diversified. Riot Games looked at the state of the internet of their time and realized that it simply was not built in the way they needed it to be. As a result, they spent a lot of time and effort to create ‘their own internet’, as close to their requirements as they could. Netflix came to a somewhat similar conclusion with Netflix Open Connect[7]. As the internet continues to grow, even more companies will find that they have their own special requirements for ‘their’ internet, and they will continue to influence the current network and adapt it to their uses.

References

[1] Peyton Maynard-Koran, “Fixing the Internet for Real Time Applications: Part I”, https://technology.riotgames.com/news/fixing-internet-real-time-applications-part-i (06.03.23)

[2] Wikipedia, “Ethernet frame”, https://en.wikipedia.org/wiki/Ethernet_frame (06.03.23)

[3] Guido Appenzeller, Isaac Keslassy, Nick McKeown, “Sizing Router Buffers”, http://yuba.stanford.edu/~nickm/papers/sigcomm2004.pdf (06.03.23)

[4] Peyton Maynard-Koran, “Fixing the Internet for Real Time Applications: Part II”, https://technology.riotgames.com/news/fixing-internet-real-time-applications-part-ii (07.03.23)

[5] Wikipedia, “Border Gateway Protocol”, https://en.wikipedia.org/wiki/Border_Gateway_Protocol (07.03.23)

[6] “Understanding BGP Communities”, https://www.noction.com/blog/understanding-bgp-communities (07.03.23)

[7] “A cooperative approach to content delivery”, https://openconnect.netflix.com/Open-Connect-Briefing-Paper.pdf (07.03.23)

[8] “League of Legends Live Player Count and Statistics”, https://activeplayer.io/league-of-legends/ (07.03.23)
