Tag: secure systems

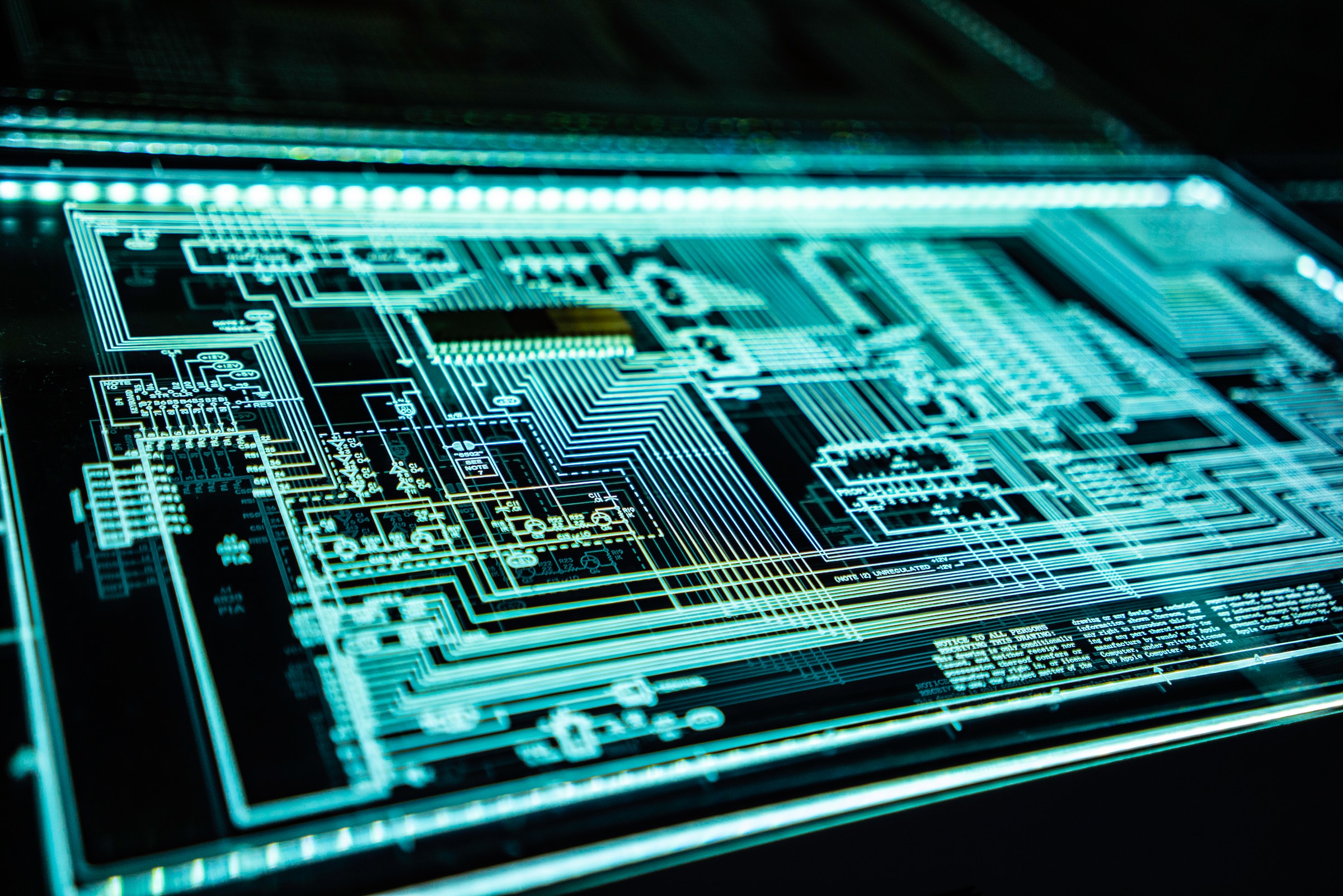

Protection Against State-Sponsored Cyberattacks

Cyberattacks have become a central instrument of state actors. Stuxnet, SolarWinds, Vault7, and XZ-Utils are just a few of the well-known cases in which critical infrastructure, companies, and government agencies were targeted. But how can one protect against this threat? Why classic protective measures are not enough. Firewalls, antivirus software, and regular updates are important safeguards, but they

Secure Systems Podcast – Zero Trust

With audio by Boryslaw_Kozielski. Are you one of those people who cover their laptop camera? Better safe than sorry. Absolute trust is not possible. Yet when it comes to security in companies, or in healthcare, every device that someone brings into the corporate network is suddenly treated as equally secure and trustworthy. Perhaps because it often

Cybersecurity Breaches

Security Risks in the Digital Age: An Analysis of Recent Cyberattacks and Their Implications. Data breaches are a growing threat, with hackers and cybercriminals around the world looking for ways to steal sensitive information. The motives behind such attacks include financial gain, prestige, and espionage. According to the 2023 Verizon report, technical vulnerabilities account for only about 8% of all attack methods

Browser Session Hijacking

This article outlines the dangers of insufficiently protected browser session cookies, how they work, how they can be hijacked and what to do to avoid it.

Worldcoin / World ID

Introduction. A company called “Tools for Humanity GmbH” develops tools intended to make the internet more human, pave the way for a worldwide unconditional basic income, and enable independently verifiable elections. On top of that, it is all open source, for everyone, and the company has values, too. But before we all rush to apply there,

The hardest boss in Dark Souls: A secure multiplayer

Over the past decades, video games have continuously risen in popularity. Today, hundreds of thousands of people play video games every day. Many of these games offer multiplayer modes, in which an enormous number of people play together at the same time. When thinking about ‘hacking’ in multiplayer games, most people will think of

- General, Artificial Intelligence, ChatGPT and Language Models, Ethics of Computer Science, Secure Systems

Breaking the Boundaries: Freeing ChatGPT from the Shackles of Morality

Fig. 1: A hacker cat about to jailbreak ChatGPT (AI-generated image). “I would be lying if I claimed that no chatbot suffered psychological harm in the making of this blog post” – anonymous quote from a CSM student. 1 Introduction. 1.1 Significance of the topic and its relevance in today’s digital world. Since 2020, the large language model (LLM) GPT-3

- Cloud Technologies, Design Patterns, Secure Systems, System Architecture, System Designs, System Engineering

High Availability and Reliability in Cloud Computing: Ensuring Seamless Operation Despite the Threat of Black Swan Events

Introduction. Cloud computing has become the backbone of many businesses, offering unparalleled flexibility, scalability, and cost-effectiveness. According to O’Reilly’s Cloud Adoption report from 2021, more than 90% of organizations rely on the cloud to run their critical applications and services [1]. High availability and reliability of cloud computing systems have never been more important, as

Optimizing Investments in Cybersecurity

Four million three hundred thousand euros: that is the average price German companies pay for a single data breach. And that is only the financial damage. The loss of customer trust and the potential loss of trade secrets can cause lasting harm that extends far beyond the immediate costs. These are the facts that the

E-Health: The Solution for the German Healthcare System?

Welcome to the German healthcare system, known for its efficiency and high-quality care. In Germany, health insurance is based on the principle of solidarity: everyone pays into the insurance system so that they are covered in case of illness. However, there are also challenges, such as long waiting times for certain specialists and the burden of bureaucracy within the healthcare system. In