Sadly, today’s security systems are often hacked and sensitive information gets stolen. To protect a company against cyber-attacks, security experts define a “rule set” to detect and prevent attacks. These “analyst-driven solutions” are built by human experts from their domain knowledge. This knowledge is based on experience and is tailored to attacks of the past. But if an attack doesn’t match the rules, the security system doesn’t recognize it and the defense is broken.

The question is: Is it possible to train a model on past attacks so that it can predict future attacks?

For a company it is really hard to define a rule set that protects against all kinds of attacks. The world is changing every day and the attacks are evolving too. In addition, a company’s environment is becoming larger and more distributed because of mobile networks, clouds and the Internet of Things (IoT).

This blog post first describes, in theory, a potential way to build a security system based on machine learning. It then presents a real-world solution by a group of researchers from MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) and the startup PatternEx. This solution detects attacks in real time and merges machine learning with the knowledge of security experts.

Big picture: Machine learning

“Well-posed Learning Problem: A computer is said to learn from experience E with respect to some task T and some performance measure P, if its performance on T, as measured by P, improves with experience E.”

T. Mitchell, 1997

Machine learning is a field of Artificial Intelligence (AI) and pattern matching that gives a computer the ability to learn without being explicitly programmed (A. Samuel, 1959). A machine learning algorithm is given a specific task. In our case the algorithm needs to detect attacks based on the data collected by an existing security system. The machine learning model searches for attack patterns and gains experience over time. After many learning iterations, the model produces an output for each event in the dataset, and these outputs are validated one by one against a performance measure such as accuracy.


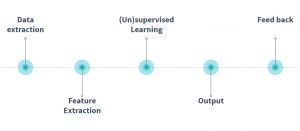

The Machine learning flow for secure systems

In today’s large networks a lot of monitoring takes place. A company uses many resources to collect and store all logs in its internal databases. Huge numbers of events are collected every day. These events need to be analyzed, and the security system needs to detect attacks in them. This collected big data can be used as input for a machine learning model that learns attack patterns.

Overview of the machine learning workflow

There are five steps to set up a machine learning pipeline for a security task. First of all, the researchers need to collect all available data for the later model. This data is assembled from server syslogs, network traffic, endpoint data, external databases and many other sources. In general, the more data, the better the result.

The second step is feature extraction based on this data. The features allow a machine learning algorithm to match patterns across all datasets and detect a cyber-attack. Possible features are the IP address, the port number, the transmitted data, the timestamp or the device type.
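As a minimal sketch of feature extraction, the snippet below turns one raw log line into a dictionary of features. The log format, the field names and the `extract_features` helper are assumptions made for illustration only; real syslog or network records look different.

```python
from datetime import datetime

# Hypothetical log format (an assumption for this sketch):
# "<ISO timestamp> <source IP> <port> <bytes sent> <device type>"
def extract_features(log_line):
    """Turn one raw log line into a dictionary of features."""
    timestamp, ip, port, sent_bytes, device = log_line.split()
    moment = datetime.fromisoformat(timestamp)
    return {
        "ip": ip,
        "port": int(port),
        "bytes": int(sent_bytes),
        "hour": moment.hour,          # time of day, derived from the timestamp
        "weekday": moment.weekday(),  # 0 = Monday
        "device": device,
    }

features = extract_features("2016-04-18T03:12:45 10.0.0.7 22 18432 laptop")
print(features)
```

Derived values such as the hour of the day are often easier for a model to use than the raw timestamp, which is why the sketch splits it up.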

The third step in the machine learning flow is training the model. A machine learning model falls into one of two categories. The first is supervised learning with labeled data: every event in the input needs the label “attack” or “no attack”, and during training the predicted label is compared with the real label. The other category is unsupervised learning, which works without any labels: the algorithm compares events with each other and searches for abnormal patterns in the incoming datasets.
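The difference between the two categories can be sketched on a toy dataset with a single feature. All numbers, the midpoint threshold and the z-score limit are assumptions chosen for illustration; a real system would use a proper learning algorithm and many features.

```python
from statistics import mean, stdev

# Toy events: bytes transferred per connection (invented numbers).
events = [500, 520, 480, 510, 495, 505, 9000, 490, 515, 8700]
labels = ["no attack"] * 6 + ["attack"] + ["no attack"] * 2 + ["attack"]

# Supervised: use the labels to learn a decision threshold
# halfway between the mean of each class.
attack_mean = mean(e for e, l in zip(events, labels) if l == "attack")
normal_mean = mean(e for e, l in zip(events, labels) if l == "no attack")
threshold = (attack_mean + normal_mean) / 2

def predict_supervised(event):
    return "attack" if event > threshold else "no attack"

# Unsupervised: no labels are used. Events that lie far away from the
# bulk of the data are flagged as anomalies.
center, spread = mean(events), stdev(events)

def is_anomaly(event, z_limit=1.5):
    # The outliers themselves inflate the spread, hence the low limit.
    return abs(event - center) / spread > z_limit

print(predict_supervised(8800), predict_supervised(505))
print([e for e in events if is_anomaly(e)])
```

The supervised variant needs someone to provide the labels first, while the unsupervised variant only reports that an event is unusual, not that it is an attack.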

After training, the fourth step is to generate an output. The result is transparent and allows investigation and explanation, for example of the source that triggered the attack or the most important features of the attack pattern.

In the fifth step, the system is tuned based on customer feedback to improve the accuracy of the model; all previous steps can be adjusted and modified. In the end, the pipeline is customized for a security task on the individual customer’s network.

Challenging aspects

Setting up a machine learning pipeline for a security task is very difficult. There are many challenging aspects to take care of:

- Anomaly detection: A real-world application usually only has access to unlabeled data, so the model must learn abnormal patterns and outlier events. This calls for an unsupervised learning algorithm.

- High cost of errors: On the one hand the model needs to find all cyber-attacks; on the other hand a model that triggers too many false positives (normal events detected as attacks) is not used in practice.

- Data is not public: Training an algorithm for secure systems is very difficult because outsiders don’t get access to the necessary data. As a result, researchers often have to be physically present in the company.

- Semantic gap: Detecting an attack is not enough; we also need to know the source, the damage, the period of time, etc.

- Evaluation difficulties: For a two-class classification problem, a machine learning model ideally gets about half of its data from each class. The problem is that the data normally includes very few attacks. One way around this is to let the model learn a compressed representation with a very low reconstruction error for normal events. If an event has a large reconstruction error, the model flags a potential attack.

- Adversarial environment: The attacks and the network change and evolve every day, and the algorithm needs to take this into account in real time. The machine learning pipeline must therefore compare events over different periods of time.

- Real time: An attack must be detected in real time in a distributed and very large system. That means the whole pipeline must be updated in a short amount of time without any interruption.
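The points about the cost of errors and about evaluation can be made concrete with a small sketch. It assumes an invented dataset with 1% attacks and a hypothetical `evaluate` helper, and shows why accuracy alone is a misleading measure when attacks are rare.

```python
def evaluate(y_true, y_pred):
    """Accuracy, recall and false positive rate for binary labels (1 = attack)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # attacks found
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # normal events passed
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false alarms
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # missed attacks
    return {
        "accuracy": (tp + tn) / len(pairs),
        "recall": tp / (tp + fn) if tp + fn else 0.0,
        "false_positive_rate": fp / (fp + tn) if fp + tn else 0.0,
    }

# Invented, heavily imbalanced data: 990 normal events and 10 attacks.
y_true = [0] * 990 + [1] * 10

# A useless "model" that never raises an alarm.
always_normal = [0] * 1000

result = evaluate(y_true, always_normal)
print(result)
```

The model that never raises an alarm is correct on 99% of the events and still misses every attack, so recall and the false positive rate need to be reported alongside accuracy.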

The best way to solve these problems is to combine the knowledge of security experts and machine learning experts. These experts need to understand the data thoroughly and share their knowledge to build a clear environment for the algorithm.

AI²: Training a big data machine to defend

On April 18, 2016, researchers from MIT presented a machine learning system for secure systems. It demonstrates how an artificial intelligence platform called AI² predicts cyber-attacks in a real-world network. The goal of AI² is to defend the network of an organization and predict attacks on a minute-by-minute basis.

The results from this system are impressive. The algorithm detects 85% of all attacks, which is three times better than previous benchmarks. The system also found previously unknown attacks hidden in the data. Another important result is that false positives are reduced by a factor of 5. The data consists of 3.6 billion log lines that were generated by millions of users over about three months. The most important aspect is that the system combines the powerful pattern matching of machine learning with the domain knowledge of in-house security experts.

In the first step of AI², an unsupervised learning algorithm searches for abnormal patterns based on defined features of the stored events. Every detected anomaly could be a potential cyber-attack. These anomalies are then presented to human analysts in a ranking system. The analysts decide which of these anomalous events are cyber-attacks and which are normal events in the network. Their feedback is used as input for a second machine learning algorithm (supervised learning), which learns the patterns of the confirmed attacks. The outputs of both algorithms are then merged in the ranking system. Because of this combination, the system learns attack patterns based on the knowledge of security experts. The labeling is the tricky part because human analysts label the events manually, so the quality of a label depends on the knowledge of the experts. You therefore need very skilled security experts.
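The loop described above can be sketched in a few lines. This is not the AI² implementation: the median-based anomaly score, the simulated `analyst_feedback` function, the threshold "model" and all numbers are stand-ins invented to show how the pieces fit together.

```python
from statistics import median

def anomaly_scores(events):
    """Unsupervised stand-in: distance of each event from the median."""
    mid = median(events)
    return [abs(e - mid) for e in events]

def top_k(scores, k):
    """Indices of the k highest scores, most anomalous first."""
    return sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]

def analyst_feedback(event):
    """Hypothetical analyst: in this toy world, very large transfers are attacks."""
    return event > 5000

def train_supervised(labeled):
    """Supervised stand-in: threshold between the confirmed attacks and the rest."""
    attacks = [e for e, is_attack in labeled if is_attack]
    normal = [e for e, is_attack in labeled if not is_attack]
    if not attacks or not normal:
        return None  # not enough feedback yet
    return (min(attacks) + max(normal)) / 2

events = [500, 520, 9000, 480, 20, 510, 8700, 495]  # invented values

scores = anomaly_scores(events)            # step 1: unsupervised scoring
shown = top_k(scores, k=3)                 # step 2: ranked list for the analysts
labeled = [(events[i], analyst_feedback(events[i])) for i in shown]
threshold = train_supervised(labeled)      # step 3: learn from the feedback

print("shown to analyst:", [events[i] for i in shown])
print("predicted attacks:", [e for e in events if e > threshold])
```

In this toy run the event with value 20 is anomalous but the analyst marks it as normal, so the supervised stand-in no longer treats it as an attack.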

AI²: Overview of the AI²-driven cybersecurity prediction

To cope with evolving attacks, AI² uses three different unsupervised learning methods for the detection of anomalies. All predicted abnormal events are fused together in the ranking system, and the top k events are selected. The advantage is that an attack can still be surfaced even if one of the three algorithms fails to flag it as an anomaly.
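One simple way to fuse several detectors is to average the rank each of them assigns to an event. The sketch below assumes this rank averaging and three hypothetical detectors with invented scores; the fusion used in AI² itself may work differently.

```python
def ranks(scores):
    """Rank of each event for one detector, where rank 0 is the most anomalous."""
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    rank = [0] * len(scores)
    for position, index in enumerate(order):
        rank[index] = position
    return rank

def fuse_top_k(score_lists, k):
    """Average the ranks of all detectors and return the k best event indices."""
    all_ranks = [ranks(scores) for scores in score_lists]
    n = len(score_lists[0])
    mean_rank = [sum(r[i] for r in all_ranks) / len(all_ranks) for i in range(n)]
    return sorted(range(n), key=lambda i: mean_rank[i])[:k]

# Invented anomaly scores from three hypothetical detectors for five events.
detector_a = [0.10, 0.90, 0.20, 0.80, 0.05]
detector_b = [0.20, 0.70, 0.10, 0.90, 0.30]
detector_c = [0.30, 0.20, 0.10, 0.95, 0.80]  # this detector misses event 1

fused = fuse_top_k([detector_a, detector_b, detector_c], k=2)
print(fused)
```

Event 1 still reaches the top of the fused ranking although one of the three detectors scores it low, which is the benefit described above.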

In practice, on the first day of training the system picks the 200 most abnormal events and the experts decide which of them are attacks. Over time the system improves its accuracy and identifies more and more events as attacks on its own. As a result, the experts only need to look at 30-40 events per day, because the system has learned the patterns of the attacks.

The researchers behind AI² say that the system can handle billions of log lines per day, transforming them into new datasets every minute. Every dataset is split into the features that are needed to predict abnormal events in the network.

All in all, the system learns the patterns of cyber-attacks that security experts have labeled, and the number of abnormal events the experts have to review per day shrinks with each known pattern. “That human-machine interaction creates a beautiful, cascading effect,” says Veeramachaneni, one of the authors.

But the system is far from perfect. It predicts only 85% of attacks, not 100%. It also produces 4.4% false positives, which is quite a lot if the system is used on billions of log lines per day. A company needs very good security experts to decide which event is an attack and which is not. Every mistake is risky and may lead to a security breach.
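A quick back-of-the-envelope calculation shows why a 4.4% false positive rate matters. The sketch assumes that the rate applies to every scored normal event and uses invented daily event counts; neither assumption comes from the AI² paper.

```python
def expected_false_alarms(events_per_day, false_positive_rate, attack_fraction=0.0):
    """Expected number of normal events that are wrongly flagged per day."""
    normal_events = events_per_day * (1 - attack_fraction)
    return normal_events * false_positive_rate

# Hypothetical daily volumes, all scored with a 4.4% false positive rate.
for events_per_day in (10_000, 1_000_000, 100_000_000):
    alarms = expected_false_alarms(events_per_day, 0.044)
    print(f"{events_per_day:>11,} events/day -> about {alarms:,.0f} false alarms")
```

Even at one million scored events per day this yields roughly 44,000 false alarms, far more than a team of analysts can review, which is why the ranking and the top k selection matter so much.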

For more information about the algorithm, you can read the AI² paper listed in the sources.

Attacks against machine learning

Keep in mind that hackers always search for security gaps in a network to break through the defense. If a system uses machine learning for its defense, the attackers may try to manipulate the algorithm or the data:

- Poisoning attacks: The attackers manipulate the training data to change the decision boundary of the algorithm. As a result, the machine learning algorithm treats an attack as a normal event.

- Evasion attacks: If the attackers know which algorithm is employed, they adjust the attack to this specific machine learning algorithm so that it can no longer detect the attack.

- Evolving attacks: In a dynamic machine learning algorithm, all events are used as input for the next training epoch. This creates an attack surface if the attack pattern changes slowly. A hacker could create many events to gradually shift the decision boundary of the prediction task.
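The slow shift of the decision boundary can be illustrated with a toy detector that retrains on every event it accepts. The mean-based threshold, the margin of 1.5 and all traffic values are assumptions for this sketch, not a description of a real product.

```python
def threshold(history, margin=1.5):
    """Toy detector: flag any event larger than margin times the mean seen so far."""
    return margin * sum(history) / len(history)

def poison(history, attack_size, max_steps=10_000):
    """Feed events just below the threshold until attack_size is no longer flagged."""
    history = list(history)
    steps = 0
    while attack_size > threshold(history) and steps < max_steps:
        # Each crafted event looks normal to the detector, so it is added to
        # the training data and raises the threshold a little.
        history.append(0.99 * threshold(history))
        steps += 1
    return history, steps

normal_traffic = [100.0] * 50  # invented values
attack_size = 400.0

print("flagged before poisoning:", attack_size > threshold(normal_traffic))
poisoned, steps = poison(normal_traffic, attack_size)
print("flagged after poisoning:", attack_size > threshold(poisoned))
print("crafted events needed:", steps)
```

None of the crafted events is ever flagged, yet together they move the boundary far enough that the real attack passes as normal. Limiting how much a single source can contribute to retraining is one possible countermeasure.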

Scientific questions

The following scientific questions could be derived from this blog post:

- Balanced classes have a big impact on the results of machine learning algorithms. For this reason, an algorithm for secure systems needs roughly 50% attacks and 50% normal events. To get more valid attacks, researchers could set up a honeypot and try to collect a wider variety of attacks. Could such a honeypot also be a chance to catch the attackers?

- More researchers could build new machine learning or deep learning algorithms for secure systems if companies created an open-source repository with their network data for universities. Universities could then offer courses in machine learning for secure systems. Could this create a new field of study with well-educated experts?

- A high rate of wrong labels is a big security risk. What happens if a security expert labels an attack as a normal event? One problem could be that a company trusts the algorithm too much, so that the damage of a cyber-attack is already very high by the time the experts notice it.

- In a real-world network an extremely large number of events takes place every second. Even if an algorithm produces only 1% false positives, that is still too many events. A company will not use an algorithm that raises this many critical events each second. So how should these false positives be handled?

- The presented machine learning pipeline needs data from the past. But what if a start-up would like to use such an algorithm? Each company has a different network with different hardware and software. Is there a way to create a machine learning pipeline that is independent of the network data? Maybe a software-as-a-service defense system based on machine learning?

- The algorithm learns attack patterns from already known attacks. In some cases these attacks are successful and the company takes damage. What if machine learning were used to detect attacks before the system takes damage? An algorithm could monitor user behavior and predict criminal actions before the attack takes place.

- A company could also use machine learning to find security gaps in its own defense strategy. An algorithm could be used to attack the company’s own system and explore ways to break through the defense boundary. After a gap is found, the security experts could close it.

Sources

- Paper – AI²: https://people.csail.mit.edu/kalyan/AI2_Paper.pdf

- Paper – Machine learning security: http://people.eecs.berkeley.edu/~tygar/papers/Machine_Learning_Security/asiaccs06.pdf

- Paper – Machine learning identify a botnet: http://www.ir.bbn.com/documents/articles/lcn-wns-06.pdf

- Paper – Collection: https://www.ll.mit.edu/mission/cybersec/publications/Cyber-CompNetworkOps/machine-learning-Security.html

- Research – McAfee Labs Threats Report March 2016: http://www.mcafee.com/us/resources/reports/rp-quarterly-threats-mar-2016.pdf

- Research – MIT News AI²: http://news.mit.edu/2016/ai-system-predicts-85-percent-cyber-attacks-using-input-human-experts-0418

- Research – ML in Cyber Security: https://www.youtube.com/watch?v=G2BydTwrrJk&t=2778s

- Research – A dangerous Mix: http://resources.infosecinstitute.com/cybersecurity-artificial-intelligence-dangerous-mix/
