
This article walks you through the motivation for continuous integration and delivery, and through choosing a suitable tool and a server to run it on. It gives a brief overview of IBM Bluemix and Kubernetes as a server solution and then discusses using a virtual machine inside a company. There are some useful instructions (for beginners) on generating key pairs for the server on Windows. Next, it explains why to run Jenkins (the CI tool of choice) as a Docker container and gives some instructions to get started. Finally, frequent problems are discussed, which will hopefully save you some time.

Intro

In recent years, agile methods like Scrum have become very popular. The main principles (known as the ‘Agile Manifesto’) are individuals and interactions over processes and tools, customer collaboration over contract negotiation, responding to change over following a plan and – most important in the context of this blog entry – working software over comprehensive documentation. Well, let’s not go into detail on the importance of documentation but focus on the “working software”. You might have heard of the iterative incremental model in agile methods, especially in Scrum. Iterative means development in iterations, e.g. in sprints. After each iteration an increment is produced: a “working software”, a compilable, runnable and shippable artefact. This is important from several points of view: the customer can look at the product as it is right now and so can be part of the process. Also, less documentation is needed during development, as one can look at working features, and the danger of developing something the customer doesn’t want is minimized. And finally, there will not be a long and tedious integration and deployment/delivery phase at the end of the project.

To be able to ship an artefact after each iteration (e.g. every two weeks) we need to automate this process. This is where DevOps comes in. DevOps is the merging of development and operations with the goal of testing, building and releasing software quickly, frequently and reliably. This leads to an automated toolchain in which a single code change by a programmer can result in a new production release. A programmer now has an impact on a production system and needs to think about configuring and running the software securely. We will set up a server for this purpose and install a CI tool.

Choosing a continuous integration/delivery (CI/CD) tool

A popular (most popular?) continuous integration tool is Jenkins, formerly known as Hudson. Before looking into the problem of hosting and setting up your Jenkins, you should be sure Jenkins is the right choice. More recently, the big Git repository providers (GitLab, Bitbucket etc.) ship with continuous integration and deployment out of the box. The big advantage: you do not have to care about maintaining the CI/CD server and software. Jenkins is (today) still the more mature tool, simply because it is widespread, open source and has a lot of plugins.

There is another article on choosing the right tool here.

While looking for a suitable server to set up Jenkins on, we looked into Bluemix (with a free education license), a KVM-based VM (Ubuntu) hosted by our university and Scaleway, one of the many server providers you will find by googling something like “online server”.

Looking for a server: Experience with Bluemix and Kubernetes

tl;dr Bluemix is overkill for a student’s project and for a small application in which load balancing, scaling and worldwide distribution are not important. The same goes for Kubernetes.

First you will have to register with IBM. There is an education program amongst others – but you will get only restricted access to the features.

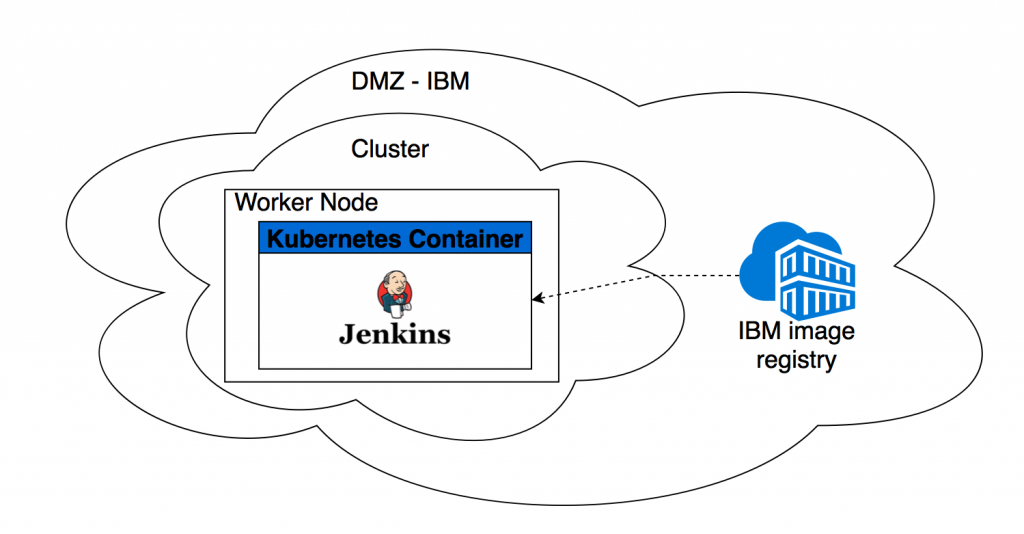

Figure 1 – Layers when setting up Jenkins on IBM Bluemix. DMZ = Demilitarized Zone

Once registered, you can create a free lite cluster. A cluster consists of workers (nodes) – well, with the lite cluster it’s only one node. Inside this node you can deploy several containers. To deploy a container, you must upload an image to your own IBM image registry (you might know Docker Hub – it’s something like that). Once the image is uploaded, you can use Kubernetes to deploy a container of your image, see Figure 1.

To interact with Bluemix, install the Bluemix CLI and the Kubernetes CLI. Here is a detailed description for the image upload, and here one for the Kubernetes installation.

There are two things you have to deploy with Kubernetes: your server container (Tutorial) and a service, which maps the internal ports (inside the node) to externally reachable ports (Tutorial).

See here for some simple example YAML files: https://github.com/cpflaume/bluemix-scripts.
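As a rough illustration of the two pieces described above, the following sketch writes a Deployment and a NodePort Service into one file. It is not taken from the linked repository: the names `jenkins` and `jenkins-service`, the file name `jenkins.yaml`, the node port 30080 and the use of the public `jenkins/jenkins:lts` image are all assumptions for illustration. On Bluemix you would reference the image in your own IBM registry instead.

```shell
# Write a minimal Deployment and Service manifest (names and ports are hypothetical).
cat > jenkins.yaml <<'EOF'
apiVersion: apps/v1
kind: Deployment
metadata:
  name: jenkins
spec:
  replicas: 1
  selector:
    matchLabels:
      app: jenkins
  template:
    metadata:
      labels:
        app: jenkins
    spec:
      containers:
        - name: jenkins
          image: jenkins/jenkins:lts   # assumption: replace with the image in your own registry
          ports:
            - containerPort: 8080      # Jenkins listens on 8080 inside the container
---
apiVersion: v1
kind: Service
metadata:
  name: jenkins-service
spec:
  type: NodePort
  selector:
    app: jenkins
  ports:
    - port: 8080
      targetPort: 8080
      nodePort: 30080                  # externally reachable port on the worker node
EOF

# Apply it once kubectl is configured for your cluster:
#   kubectl apply -f jenkins.yaml
#   kubectl get pods,services
```

Note that this sketch defines no volume, so all Jenkins data is lost when the container is replaced.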

So far so good. The only thing that put us off the free Bluemix account is that you cannot persist any data – this feature is hidden behind a paywall. Buying storage (50GB for $2/month) did not sound too bad, but don’t! Even if you buy such a volume, you are not allowed to connect it to your free cluster. There is a workaround that we did not look into in detail, see here.

Conclusion: Quite a setup overhead; it only makes sense if you plan something big.

Looking for a server: Virtual server hosted by your company (or your university)

tl;dr The biggest drawback of using resources you cannot administer yourself is time. Any problem will result in a call/ticket/email, and it can take days until it is fixed. Besides that, it is a good solution, as you can make nearly everything possible and your server is a real virtualized server and not “only” a Docker container (there is a difference!).

Request a server

Find out who is responsible and make sure to stay on good terms with this person. Most probably you will have further requests and problems you need help with. You might be asked to provide a public SSH key – this key will be added to the ~/.ssh/authorized_keys file of a user on the server. This allows that user (e.g. root) to log in without a password, by proving possession of the private key instead. The good thing is that you do not have to exchange any password with your server administrator and there is no problem with unsafe passwords.

Generating a key pair

On Windows I recommend using the PuTTY Key Generator (look here for puttygen.exe).

Open the PuTTY Key Generator, click “Generate” and move your mouse in the field under the progress bar (this adds randomness). Add a key comment that helps you remember what you generated this key for.

Your public key in OpenSSH format is displayed in the top field.

Click “Save private key” and store it in a location where you will find it again and never lose it. It’s a “PuTTY-User-Key-File” and contains the private and public key.

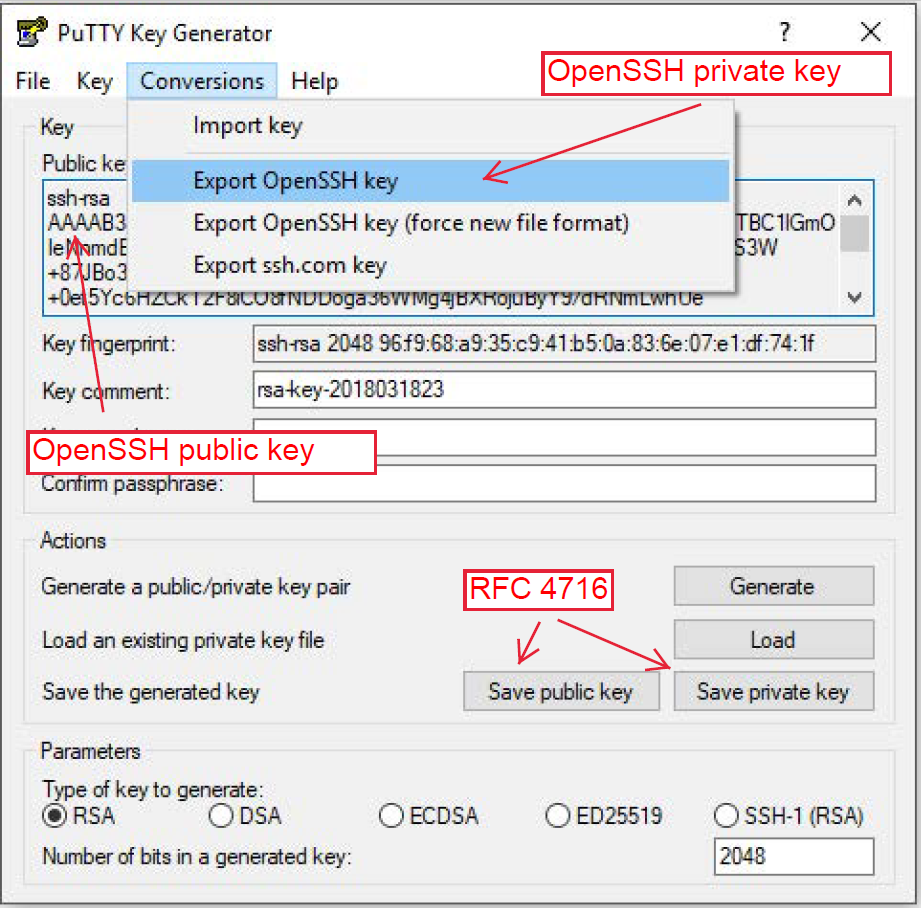

Note: There are different formats for public and private keys. The most common one is the OpenSSH format. If you save a key with PuTTY, it is NOT in the OpenSSH format: “Save public key” writes “The Secure Shell (SSH) Public Key File Format” (RFC 4716), and “Save private key” writes PuTTY’s own .ppk format. If you need the OpenSSH private key, you have to export it accordingly (Conversions -> Export OpenSSH key), and for the public key just copy the text shown in the top field.

Figure 2 – Converting and extracting keys in different formats

How to tell which format

Public OpenSSH key example:

ssh-rsa AAAAB3N … bmhSw== rsa-key-2018-comment

Public RFC 4716 key example:

---- BEGIN SSH2 PUBLIC KEY ----
Comment: "rsa-key-2018-comment"
AAAAB3N … bmhSw==
---- END SSH2 PUBLIC KEY ----
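The distinction between the two examples above can also be checked mechanically by looking at the first line of the key file. The following is a minimal sketch; `key_format` is a hypothetical helper name, not a tool shipped with PuTTY or OpenSSH, and it only recognises a few common key types.

```shell
# Print the format of a public key file, judged by its first line.
# key_format is a hypothetical helper, not part of PuTTY or OpenSSH.
key_format() {
    first_line=$(head -n 1 "$1")
    case "$first_line" in
        # RFC 4716 files start with a fixed marker line.
        "---- BEGIN SSH2 PUBLIC KEY ----"*) echo "rfc4716" ;;
        # OpenSSH public keys are one line: key type, base64 data, comment.
        ssh-rsa\ *|ssh-ed25519\ *|ecdsa-sha2-*\ *) echo "openssh" ;;
        *) echo "unknown" ;;
    esac
}
```

Called as `key_format mykey.pub` (the file name is a placeholder), it prints `openssh`, `rfc4716` or `unknown`.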

If you have a Linux machine just use the command line tool “ssh-keygen”.
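A minimal sketch of what that looks like, assuming the OpenSSH client tools are installed. It generates a key pair into a throwaway directory with an empty passphrase purely for demonstration, then converts the public key between the two formats discussed above. The key size and the temporary location are choices made for this example.

```shell
# Demo only: generate into a throwaway directory with an empty passphrase.
# For a real key, drop -N "" so ssh-keygen prompts for a passphrase,
# and let it write to the default location under ~/.ssh.
key_dir=$(mktemp -d)

if command -v ssh-keygen >/dev/null 2>&1; then
    # 1. Create an RSA key pair; the comment mirrors the PuTTY example above.
    ssh-keygen -q -t rsa -b 3072 -C "rsa-key-2018-comment" -N "" -f "$key_dir/id_rsa"

    # 2. The public key is already in OpenSSH format (one line, starts with "ssh-rsa").
    cat "$key_dir/id_rsa.pub"

    # 3. Export the public key to RFC 4716 format, then convert it back again.
    ssh-keygen -e -m RFC4716 -f "$key_dir/id_rsa.pub" > "$key_dir/id_rsa_rfc4716.pub"
    ssh-keygen -i -m RFC4716 -f "$key_dir/id_rsa_rfc4716.pub"
else
    echo "ssh-keygen not found; install the OpenSSH client first" >&2
fi
```

The `-i` conversion is the one you need when an administrator asks for an OpenSSH public key and you only have the file saved by PuTTY.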

Log onto the server with PuTTY

I still assume you use Windows. If you haven’t already, download PuTTY – it’s an SSH client. Enter the host name (or IP address) of your server. The standard port is 22, connection type SSH.

Tip: If you prefix the host name with the user name you want to log in with, you do not have to type it every time, e.g. root@myserver.de

Now you must provide the private key file to use for this connection.

Click on the + to the left of Connection -> SSH.

Click on “Auth”

Click on “Browse…” and add the path of the private key file stored before.

Now go back to Session by clicking on “Session” (top entry in the left menu, you might have to scroll). Before you open the connection, you want to save it for the future. Type a name in the field under “Saved Sessions” – I always use the hostname. Then press “Save”.

Finally: Click “Open” – and you should be connected to your server.

Tip: For establishing a new connection, just double click on the saved session.

Jenkins as docker image

Even though we are on a real server now, we decided to deploy our Jenkins as a Docker container. We need to think about maintainability: a new version of Jenkins? No problem, kill the old container and start a new one. Move to another server? No problem, copy the user data and start the Docker container on the other server.

There are two things to do: First, you need to make sure your data will not get lost. Achieve this by mounting a directory on your server onto the directory in which Jenkins stores all the information about jobs etc. If you kill the Docker container now, the user data will still be persisted. Second, you must not install anything manually inside the Docker container, because it will be gone after you kill the container. Instead, you need to change the image (the base of the containers). This is easy: you can write a Dockerfile and build an image from it. Our Dockerfile and image can be found here https://hub.docker.com/r/connydockerid/jenkins-image-nodejs-android/. By documenting all required changes in the Dockerfile, you will never get into a situation in which upgrading or moving your server becomes a nightmare because of hacky changes that nobody remembers anymore.

Note: The only thing you might have to look after when moving your server is the authorization and authentication with other servers. In our case we must authorize only with Heroku – the platform we use for continuous delivery.

Docker explained in two paragraphs

Docker images are like prepared operating systems with some software already installed. You can assemble your own images by choosing an existing image as a base and extending it. You do this by writing a Dockerfile and telling Docker to build an image according to these instructions.
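To make this concrete, here is a minimal sketch that writes a small Dockerfile extending the official Jenkins LTS image. The directory name `my-jenkins`, the tag `my-jenkins:latest` and the choice of extra packages are assumptions for illustration; this is not the Dockerfile behind our published image.

```shell
# Write a small Dockerfile that extends the official Jenkins LTS image.
# The extra packages are an assumption for illustration; this is not
# the Dockerfile behind connydockerid/jenkins-image-nodejs-android.
mkdir -p my-jenkins
cat > my-jenkins/Dockerfile <<'EOF'
FROM jenkins/jenkins:lts

# Installing packages requires root inside the image.
USER root
RUN apt-get update \
    && apt-get install -y --no-install-recommends nodejs npm \
    && rm -rf /var/lib/apt/lists/*

# Switch back so Jenkins does not run as root.
USER jenkins
EOF

# Build the image on a machine with Docker installed (tag name is hypothetical):
#   docker build -t my-jenkins:latest my-jenkins
```

Every tool your build jobs need goes into a `RUN` line like the one above, so the image can be rebuilt from scratch at any time.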

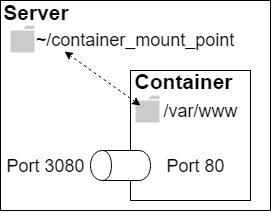

Figure 3 – Docker container mounts a directory on the host and maps to port 3080 on the host. A corresponding docker command could be: docker run -p 3080:80 -v ~/container_mount_point:/var/www jenkins/jenkins:lts

Docker containers are based on a Docker image. By running a container, you start an instance of the image. A container behaves like another server/machine. Things you can configure a container with: mount a volume to persist/access data, configure the network (e.g. map ports), run commands inside the container, e.g. via an interactive shell.

Install docker

I don’t want to go into detail. There is a detailed description on https://docs.docker.com/install/ for all common operating systems.

If you are new to docker, here is a useful cheat sheet: https://www.docker.com/sites/default/files/Docker_CheatSheet_08.09.2016_0.pdf

Start a Jenkins docker container

First you need to pull an image. You can use the plain Docker Jenkins image from https://hub.docker.com/r/jenkins/jenkins/, our adjusted image https://hub.docker.com/r/connydockerid/jenkins-image-nodejs-android/ or build one yourself. If you build one yourself, you have to either build it on the server with the “docker build …” command or build it anywhere and upload it to a Docker registry like https://hub.docker.com.

docker pull connydockerid/jenkins-image-nodejs-android:latest

Now run the container with the following command:

docker run -p 80:8080 -v /data/jenkins_mount_point:/var/jenkins_home connydockerid/jenkins-image-nodejs-android:latest

Note: Jenkins runs by default on port 8080.

Explained:

docker run […] connydockerid/jenkins-image-nodejs-android:latest starts a container of the provided image

-p 80:8080

Maps the port 8080 of the container to the port 80 of the host (our server). Jenkins will be running on port 8080 inside the container and will be reachable on port 80 on our server with this configuration, e.g. myserver.com:80 (yes, 80 is default, but for the sake of clarity…).

-v /data/jenkins_mount_point:/var/jenkins_home

Mounts the host directory /data/jenkins_mount_point at /var/jenkins_home inside the container. Jenkins stores all its data in this directory, so persisting it persists the state of Jenkins.

If everything worked out, you should now be able to reach your server.
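The run command above stays in the foreground and leaves the container unnamed. For a server you will probably want it detached, named and restarted automatically. The sketch below also covers the "kill the old container, start a new one" upgrade described earlier. The container name `jenkins`, the `run` and `upgrade_jenkins` helpers and the `DRY_RUN` switch are assumptions of this example; by default it only prints the commands so you can review them first.

```shell
# Hypothetical names; adjust to your setup.
IMAGE="connydockerid/jenkins-image-nodejs-android:latest"
NAME="jenkins"
DATA_DIR="/data/jenkins_mount_point"

# Print each command; only execute it when DRY_RUN=0 is set.
run() {
    echo "+ $*"
    if [ "${DRY_RUN:-1}" = "0" ]; then "$@"; fi
}

# Replace the running container with one started from the newest image.
# The job data survives because it lives in $DATA_DIR on the host.
# On the very first start, "stop" and "rm" fail harmlessly (no container yet).
upgrade_jenkins() {
    run docker pull "$IMAGE"
    run docker stop "$NAME"
    run docker rm "$NAME"
    run docker run -d --name "$NAME" --restart unless-stopped \
        -p 80:8080 -v "$DATA_DIR:/var/jenkins_home" "$IMAGE"
}

upgrade_jenkins
```

Here `-d` detaches the container from your terminal, `--name` gives it a stable name for later commands and `--restart unless-stopped` brings it back up after a reboot of the server.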

Useful commands

# Lists all containers, running and not running

docker ps -a

# Starts an interactive shell in the container

docker exec -it CONTAINER_ID /bin/bash

# Starts|stops a container

docker start|stop CONTAINER

Frequent Problems with our virtual server

Request timeout trying to access something in the internet from the server

This could be a firewall issue: most companies and universities have strict firewall rules which do not allow communication on nonstandard ports/protocols. If you have, for example, an externally hosted MongoDB like mLab, you will communicate with this database on a nonstandard port like 5342. A timeout can be a hint of a firewall issue.
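To check from the server whether a particular port is reachable, a small helper like the following can be useful. It is a sketch under assumptions: `port_open` and `check_port` are hypothetical names, and it relies on bash’s `/dev/tcp` pseudo-device and the coreutils `timeout` command being available on your server.

```shell
# port_open HOST PORT [TIMEOUT_SECONDS]
# Hypothetical helper: succeeds if a TCP connection can be opened.
# Relies on bash's /dev/tcp pseudo-device and the coreutils "timeout" command.
port_open() {
    timeout "${3:-5}" bash -c 'exec 3<>"/dev/tcp/$1/$2"' _ "$1" "$2" 2>/dev/null
}

# check_port HOST PORT [TIMEOUT_SECONDS]
# Prints a one-line verdict; "timeout" exits with 124 when the time limit is hit.
check_port() {
    port_open "$1" "$2" "${3:-5}"
    case $? in
        0)   echo "$1:$2 reachable" ;;
        124) echo "$1:$2 timed out (packets may be dropped by a firewall)" ;;
        *)   echo "$1:$2 not reachable (refused or host unknown)" ;;
    esac
}
```

For example, `check_port google.com 443` should succeed on most networks, while a call with your database host and its nonstandard port that ends in a timeout is a hint to talk to your administrator.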

No internet / no DNS

This may be an issue with your DNS: any request using a domain name will fail. Execute the following command to investigate:

nslookup google.com

If the response is “server can’t find google.com: SERVFAIL”, contact your administrator and tell them your DNS does not work.

Cannot reach my server from outside

You might have started several containers. Only one of them can occupy port 80, so you might have used other (nonstandard) ports for the other containers. And there it is again: nonstandard. This could again be a firewall issue. Contact your administrator and ask them to open those ports.

Conclusion

There is no perfect solution to all problems. You have to consider many aspects like project size, project requirements, technologies and your operational environment before setting up a continuous integration and delivery environment. The solution must fit the problem and you should reflect every now and then how (or if) you benefit from the system you set up.
